Introduction

The direct motivation for this post is a very unusual piece of news: one of the key authors of a new memory framework for LLM agents called MemPalace is… Milla Jovovich. Leeloo from The Fifth Element and Alice from Resident Evil have taken up vibe-coding. It does sound more than a little bit cringy, but let us set aside skepticism for a moment, because the underlying topic is serious.

Memory for LLM agents is one of the most interesting—and also most painful!—problems of 2025-2026. There are many approaches, and as the old engineering adage goes, if a problem has twenty different solutions, it means none of them really work. So I will use the news hook to survey the entire field, which has accumulated a lot of interesting developments over the past year.

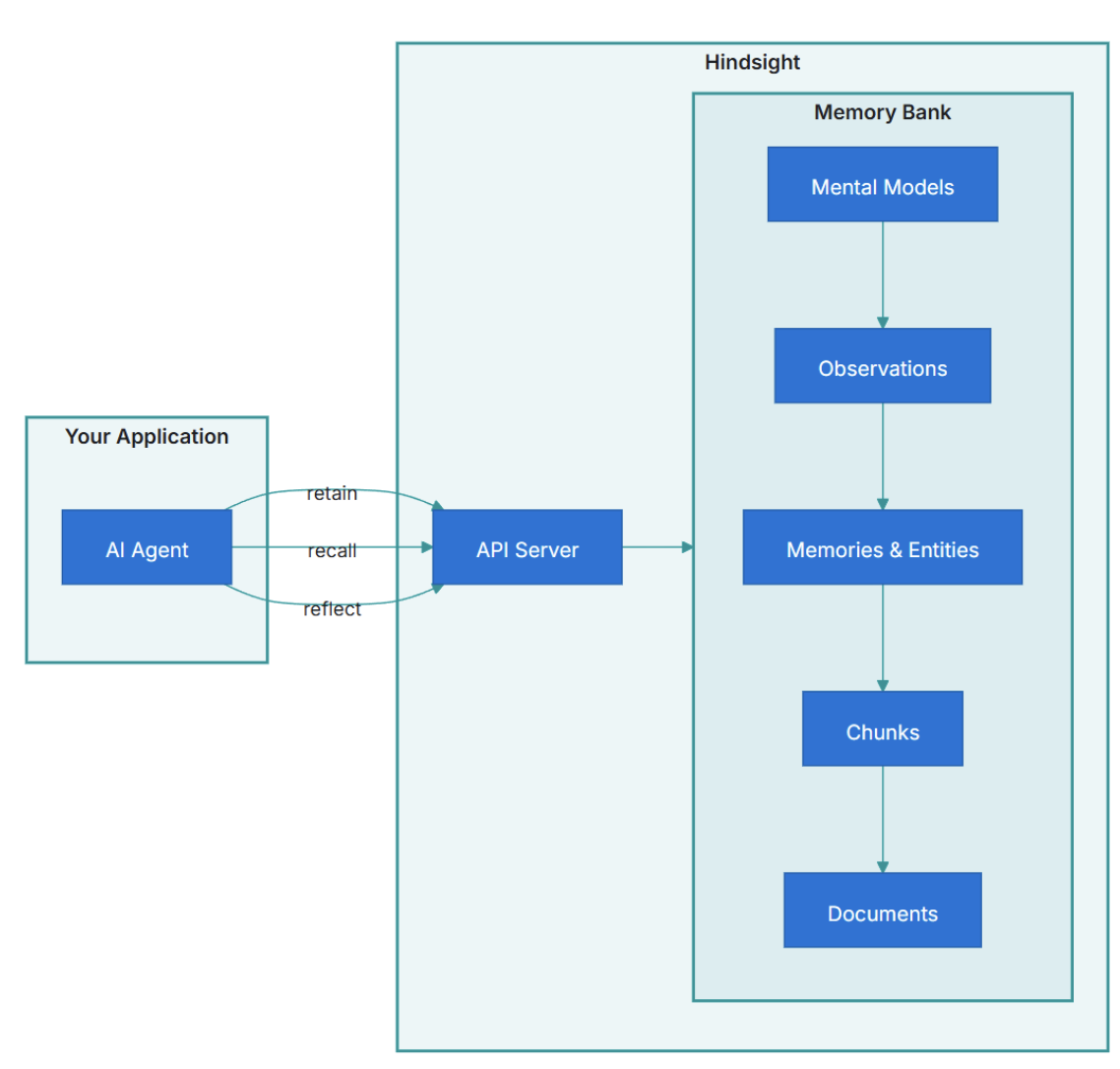

Let me warn you up front that my verdict on MemPalace will be rather modest (spoiler: its best results come from a mode that never uses the “memory palace” at all). The tool I consider the current best for agent memory is Hindsight (Latimer et al., 2025, GitHub).

But let us start from the beginning: in this post, we will figure out what “memory” even means for an LLM-based agent, how people classify different types of memory, and why it has suddenly become one of the most interesting subproblems in applied AI. Here is a meta-list, a survey of surveys:

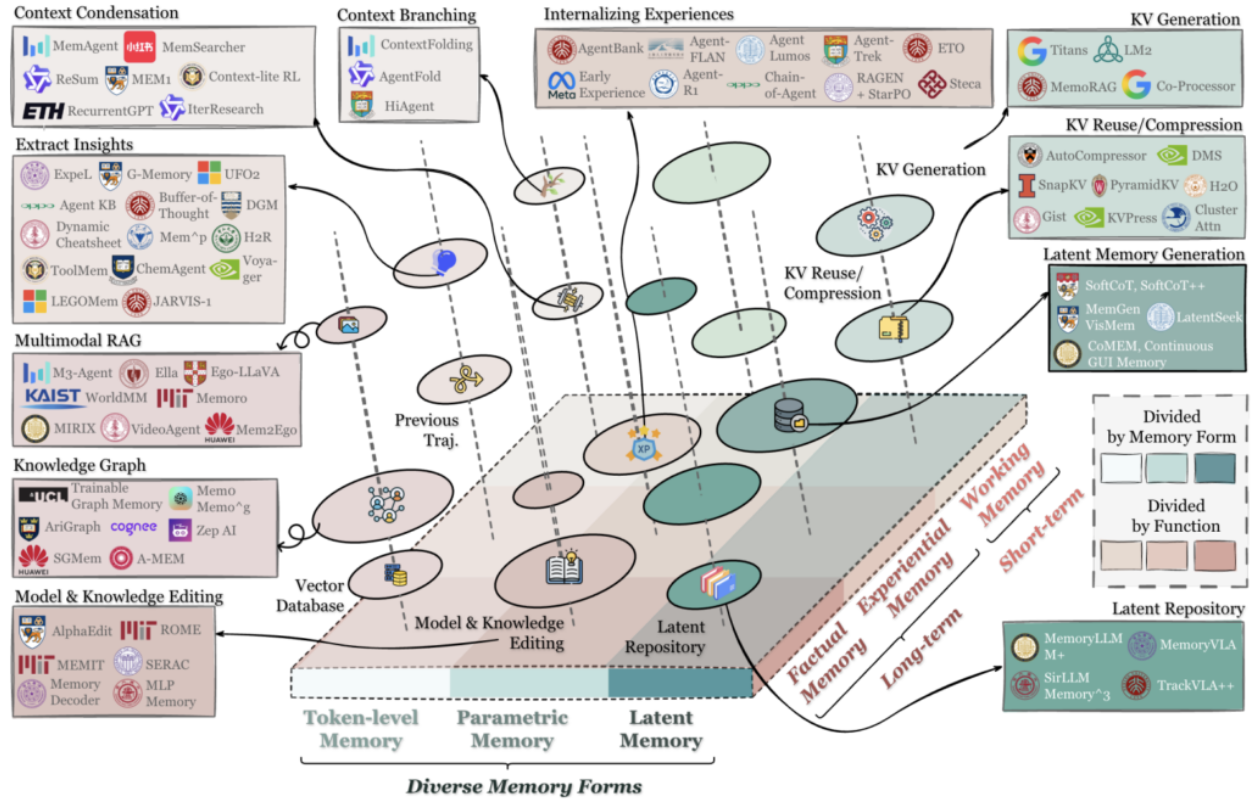

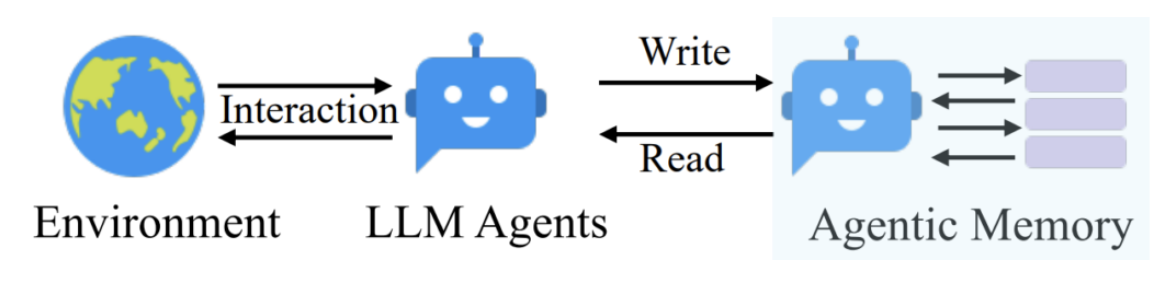

- Memory in the Age of AI Agents (Hu et al., 2025) — my main source, a 47-author survey from December 2025. It proposes a taxonomy along three orthogonal axes: forms (token-level / parametric / latent), functions (factual / experiential / working), and dynamics (formation / evolution / retrieval), with separate sections on benchmarks, open-source frameworks, and frontier directions.

- Anatomy of Agentic Memory (Jiang et al., 2026) — a February 2026 survey emphasizing empirical evaluation pitfalls: benchmark saturation, sensitivity to judge models, backbone dependence, and so on. It helps explain why numbers reported in papers are often inflated even without any malicious intent.

- Cognitive Architectures for Language Agents (CoALA; Sumers et al., 2024) — the classic, heavily-cited paper that looks at memory from a neuropsychological angle. Often referenced as the "default" taxonomy for agent memory.

- A Survey on the Memory Mechanism of Large Language Model based Agents (Zhang et al., 2024) — probably the earliest systematic survey of agent memory; outdated but useful to understand how the problem formulation evolved.

Here is the structure of Hu et al. (2025); we won’t reach this level of detail in this post, but we will try to get close:

Why LLM Agents Need Memory At All

The first question that comes up when you start talking about memory for LLM agents is: why bother? Context windows have grown to a million tokens, sometimes two million. Sure, you cannot fit the entire Internet in there, but RAG (retrieval-augmented generation) has been around for a long time. Can’t we just stuff all the RAG results into that million-token context and call it a day?

Not quite, for several reasons.

First, long contexts degrade. There is the famous “lost-in-the-middle” effect (Liu et al., 2023): models remember the beginning and the end of their context well, but the middle gets fuzzy. The longer the context, the worse this interference becomes: the model will start confusing facts, forgetting what it “learned” a hundred pages ago, and generally behaving unpredictably.

An analogy I find useful is a student trying to cram for an exam by reading a 500-page textbook in a day. They will remember the first chapter (it was fresh and new) and the last chapter (it is still in short-term memory), but everything in the middle will blur together. That is essentially what happens to an LLM with a very long context window. Having the capacity to hold a million tokens does not mean the model can actually use all of them effectively.

Second, it’s expensive. Not just in dollars (though that too): processing 200K tokens on every turn of a long dialogue is wasteful. But it is also expensive in latency since you are essentially forcing the model to reread its entire memory for every single new token, and the computational cost of attention grows faster than linearly with context length. Formally it is quadratic, though million-token contexts imply various tricks that bring this down.
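To make the cost argument concrete, here is a back-of-the-envelope sketch. The turn count, message size, and memory budget are made-up illustrative numbers, and the calculation ignores prompt caching and any sub-quadratic attention tricks; it only counts how many prompt tokens get read in total.

```python
def tokens_read_full_history(turns: int, tokens_per_turn: int) -> int:
    """Total prompt tokens read if every turn resends the whole dialogue so far."""
    # Turn k re-reads k * tokens_per_turn tokens, so the total grows quadratically in turns.
    return sum(k * tokens_per_turn for k in range(1, turns + 1))


def tokens_read_with_memory(turns: int, tokens_per_turn: int, memory_budget: int) -> int:
    """Total prompt tokens read if each turn sees the new message plus a fixed-size memory."""
    return turns * (tokens_per_turn + memory_budget)


# Hypothetical dialogue: 200 turns of 1,000 tokens, with a 4,000-token memory budget.
full = tokens_read_full_history(200, 1_000)
bounded = tokens_read_with_memory(200, 1_000, 4_000)
print(f"full history: {full:,} tokens, bounded memory: {bounded:,} tokens")
```

Under these assumptions the full-history approach reads about twenty times more tokens, and the gap keeps widening as the dialogue gets longer.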

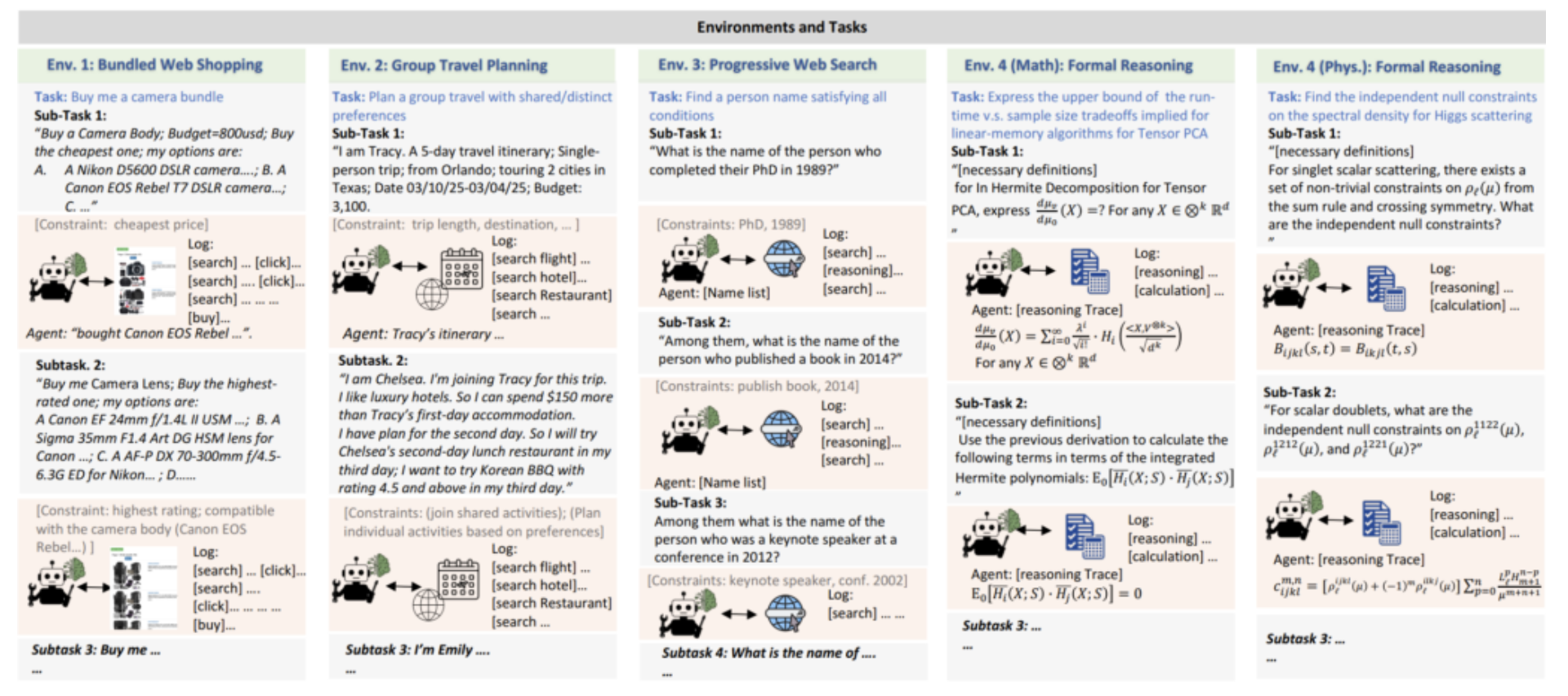

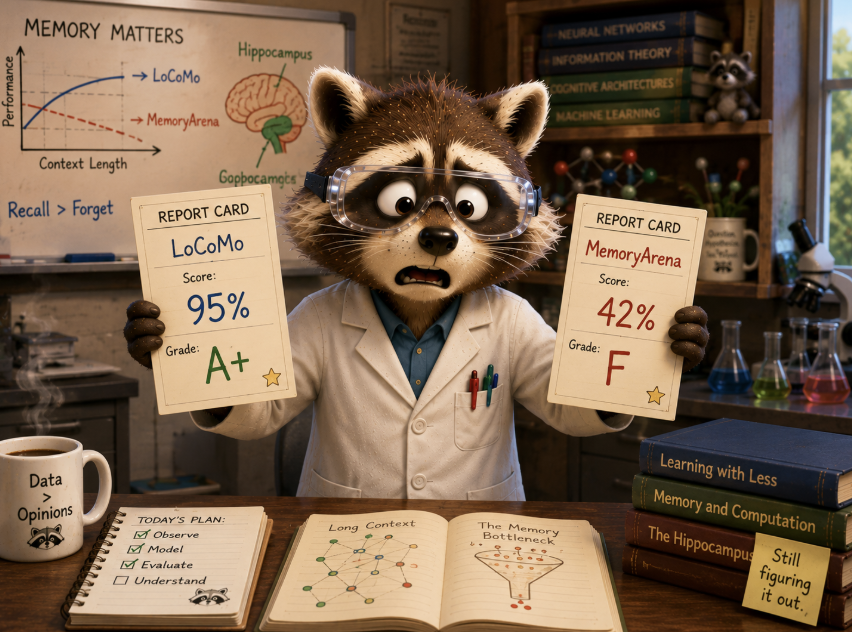

Third—and this is the most interesting part—there is a benchmark-to-deployment gap. The authors of MemoryArena (He et al., 2026) took memory systems that score near-perfectly on standard benchmarks (LoCoMo, LongMemEval) and plugged them into real agent tasks: web navigation, constrained planning, sequential reasoning.

Here are some sample problems:

And as a result, systems that scored ~95% on LoCoMo dropped to 40–60% on MemoryArena! It appears there is a meaningful difference between “recalling a fact” and “recalling a fact in a way that supports decision-making”, that is, between passive recall and active use. Humans experience this gap too, by the way: ask a former high-school student to state the Pythagorean theorem, and they will probably do it easily, but ask them to calculate how many rolls of wallpaper to buy for a triangular room, and many more will be completely lost. Same knowledge, very different application.

Fourth, there are engineering concerns: proper memory must be updatable. If an agent recorded two months ago that the user lives in Berlin, and yesterday the user moved to Lisbon, what happens to the Berlin fact? Delete it? Update it? Keep it with a “stale” label?

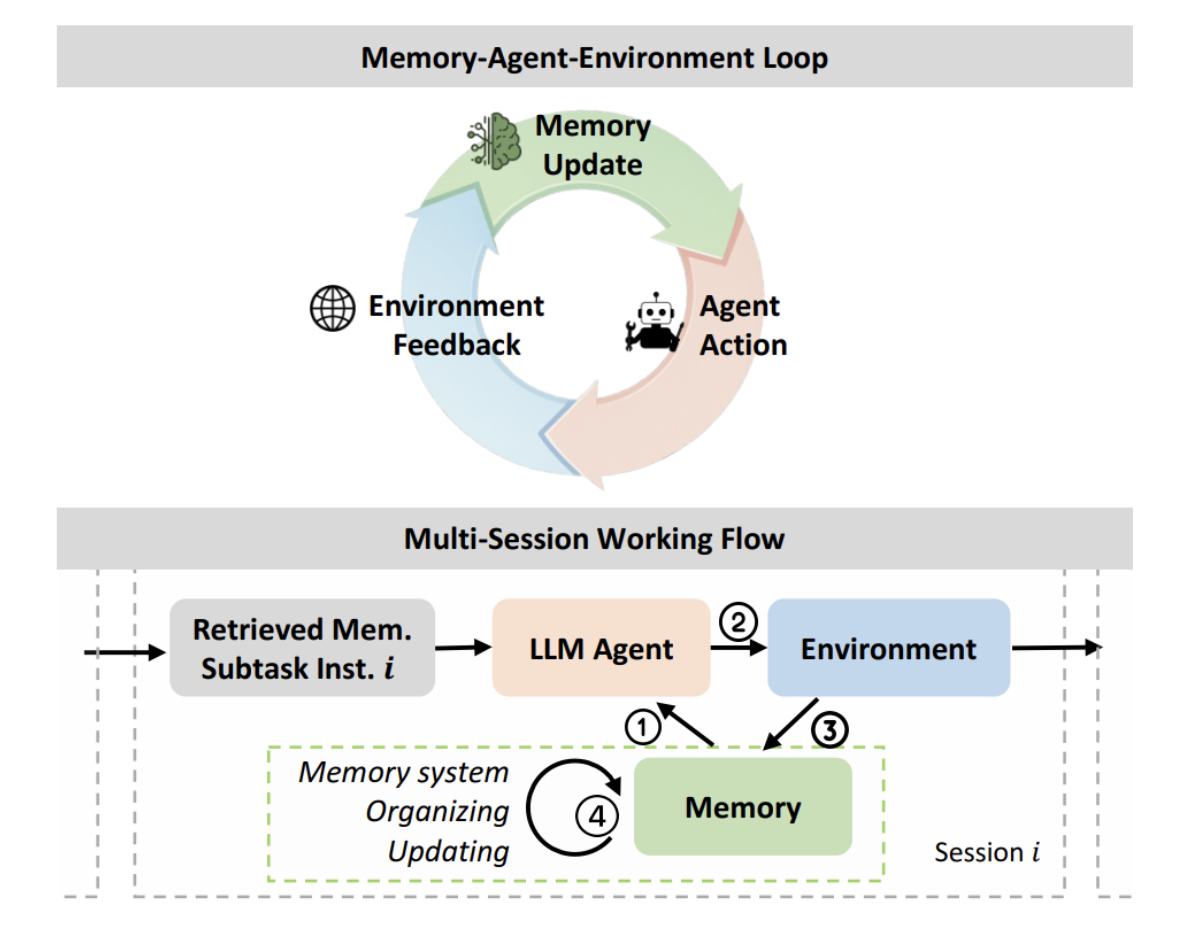

In other words, memory for an agent cannot just be a long log or a database in the ordinary sense. It has to be a system that answers many different questions at once: what to store, how to store it, how to update, what to retrieve on a given query, how to merge, how to flag contradictions, when to forget, and so on.

Taxonomies: How to Think About the Structure of Agent Memory

Before we look at specific systems, let us impose a bit of structure. There are at least three parallel classification schemes for agent memory.

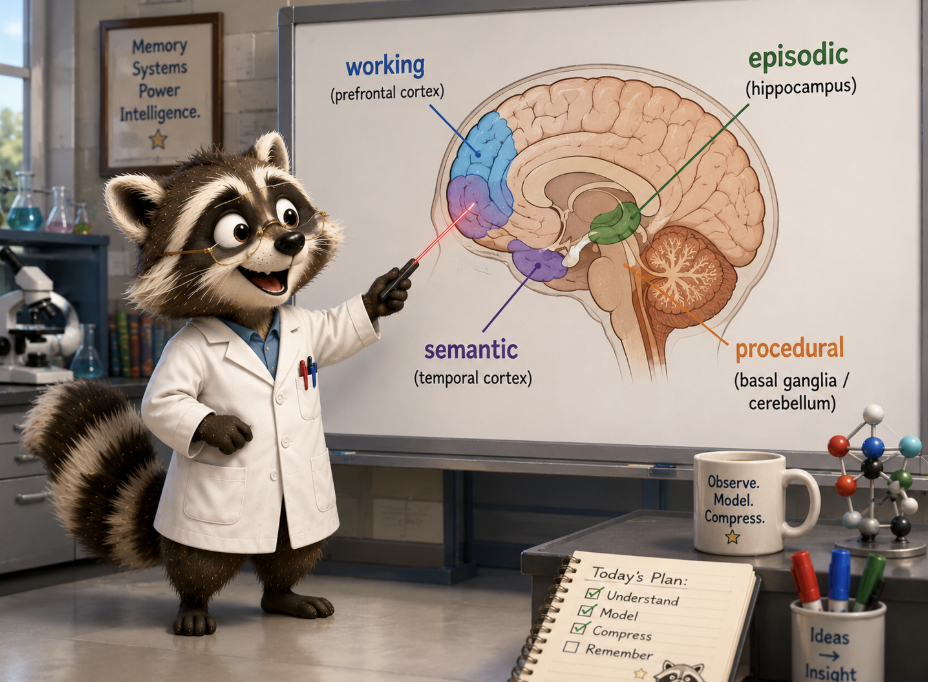

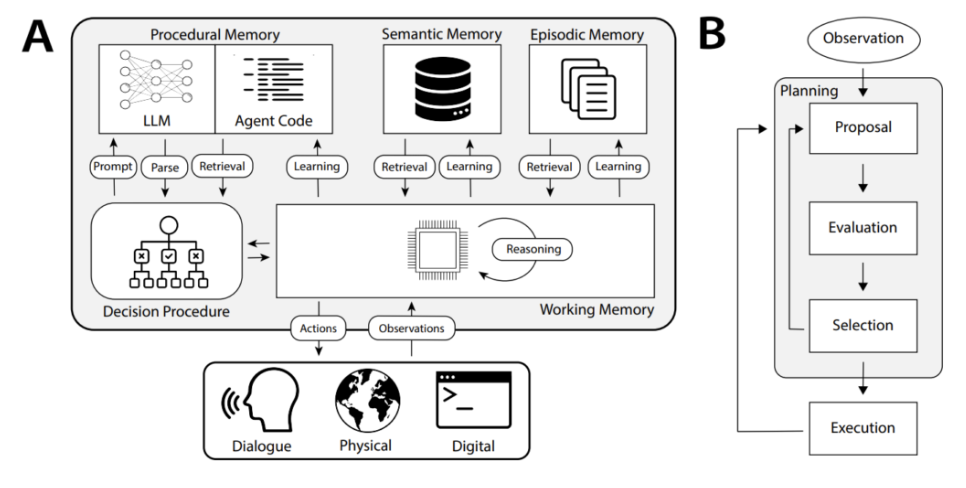

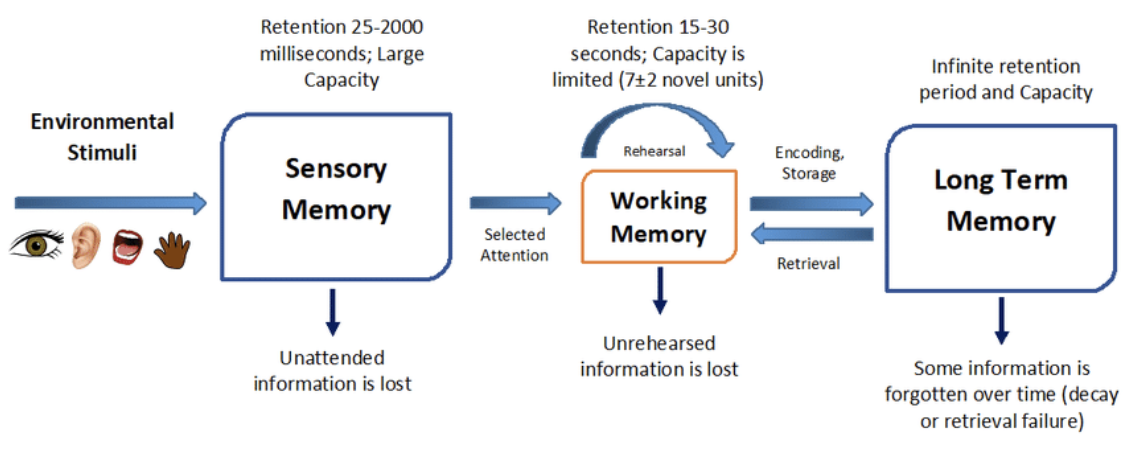

First slice, neuropsychological. We can draw analogies with how human memory works. The now-classic CoALA framework (Sumers et al., 2024) follows Tulving's classification and divides agent memory into four types:

- working memory, i.e., what is in the context right now: "we are currently working on this script";

- episodic memory, timestamped records of past episodes: "yesterday we talked about X";

- semantic memory, factual knowledge about the world: "the user's name is Alice, she works at Google";

- procedural memory, learned skills and behavioral patterns: "when the user asks about X, first check Y".

If you have ever studied psychology, you will recognize these as standard categories from cognitive science. They map nicely onto what an AI agent needs: a scratchpad for the current task (working memory), a diary of what happened before (episodic), a knowledge base of facts (semantic), and a playbook of learned strategies (procedural). The classification is useful for understanding what a memory system should store, but it says nothing about how.

Second slice, operational. Alternatively, we can build a taxonomy around the atomic operations a memory system must support. Nearly all modern systems implement three core operations:

- formation / retain: how information enters memory: fact extraction, normalization, compression, indexing;

- retrieval / recall: how memory is returned on a query: embedding similarity, full-text search, graph traversal, temporal filters, reranking;

- management / reflect: how memory evolves when new data arrives: consolidation, updates, conflict resolution, forgetting.
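The three operations above can be sketched as a tiny interface. Everything here is hypothetical: the `AgentMemory` protocol and the `NaiveMemory` class are illustrative names, not the API of any system discussed in this post, and the toy implementation uses keyword overlap where a real system would use embeddings and an LLM.

```python
from dataclasses import dataclass, field
from typing import Protocol


class AgentMemory(Protocol):
    """Hypothetical interface named after the retain / recall / reflect operations."""

    def retain(self, text: str) -> None: ...
    def recall(self, query: str, k: int = 3) -> list[str]: ...
    def reflect(self) -> None: ...


@dataclass
class NaiveMemory:
    """Toy implementation: verbatim storage, keyword-overlap recall, dedup as management."""

    items: list[str] = field(default_factory=list)

    def retain(self, text: str) -> None:
        self.items.append(text.strip())

    def recall(self, query: str, k: int = 3) -> list[str]:
        words = set(query.lower().split())
        scored = [(len(words & set(item.lower().split())), item) for item in self.items]
        ranked = sorted((pair for pair in scored if pair[0] > 0), key=lambda pair: -pair[0])
        return [item for _, item in ranked[:k]]

    def reflect(self) -> None:
        # Real systems consolidate, resolve conflicts, and forget; here we only deduplicate.
        self.items = list(dict.fromkeys(self.items))


memory: AgentMemory = NaiveMemory()
memory.retain("The user lives in Berlin")
memory.retain("The user lives in Berlin")
memory.retain("The user prefers Python")
memory.reflect()
print(memory.recall("Berlin"))
```

Even in this toy, `reflect` is the method with the least obvious right answer, which matches where the surveys place the open problems.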

All leading surveys agree that the hardest, least-solved problems live in the third bucket. The RAG industry already does retrieval quite well; formation is largely an engineering exercise; but conflict resolution, temporal reasoning, selective forgetting, and knowledge updates are still on the frontier.

Third slice, methodological. Neither of the above tells you how specific systems actually work. So let me offer my own rough classification by dominant approach. In many categories there is really just one prominent example, but that also gives us a natural outline for the rest of this post:

- Store-first + vector search: save everything verbatim, search by similarity. Here we have MemPalace and some variants of Supermemory.

- Extract-and-update: an LLM extracts facts from conversation, stores them in a database, updates on conflict; Mem0.

- OS-like hierarchy: memory is split into levels with explicit tool calls to move data between them; MemGPT / Letta.

- Temporal knowledge graphs: entities, relationships, explicit temporal structure; Zep / Graphiti.

- Self-editing notes: atomic notes that get rewritten retroactively when new information arrives; A-MEM.

- Verbal RL / reflection: verbal self-analysis as a training signal, without actual gradient-based weight updates; Reflexion, reflections in Generative Agents.

- RL-trained memory management: learning an add/update/delete policy via reinforcement learning on downstream tasks; Memory-R1.

- Multi-strategy parallel retrieval + fusion: memory uses several retrieval strategies in parallel, then merges results via reranking; Hindsight, and partly Graphiti.

- Graph-based associative recall: personalized PageRank or similar over a knowledge graph; HippoRAG.

- Bio-inspired consolidation: memory management methods inspired by human memory models: multi-stage consolidation, "sleep", gating etc.; LightMem, SleepGate, EverMemOS.

- Compression-first: instead of retrieval, try to compress long context into dense observations; Mastra Observational Memory.

With the broad strokes done, let us walk through the key systems in each category.

The Three Pillars of Agent Memory

Generative Agents: A Weighted Stream

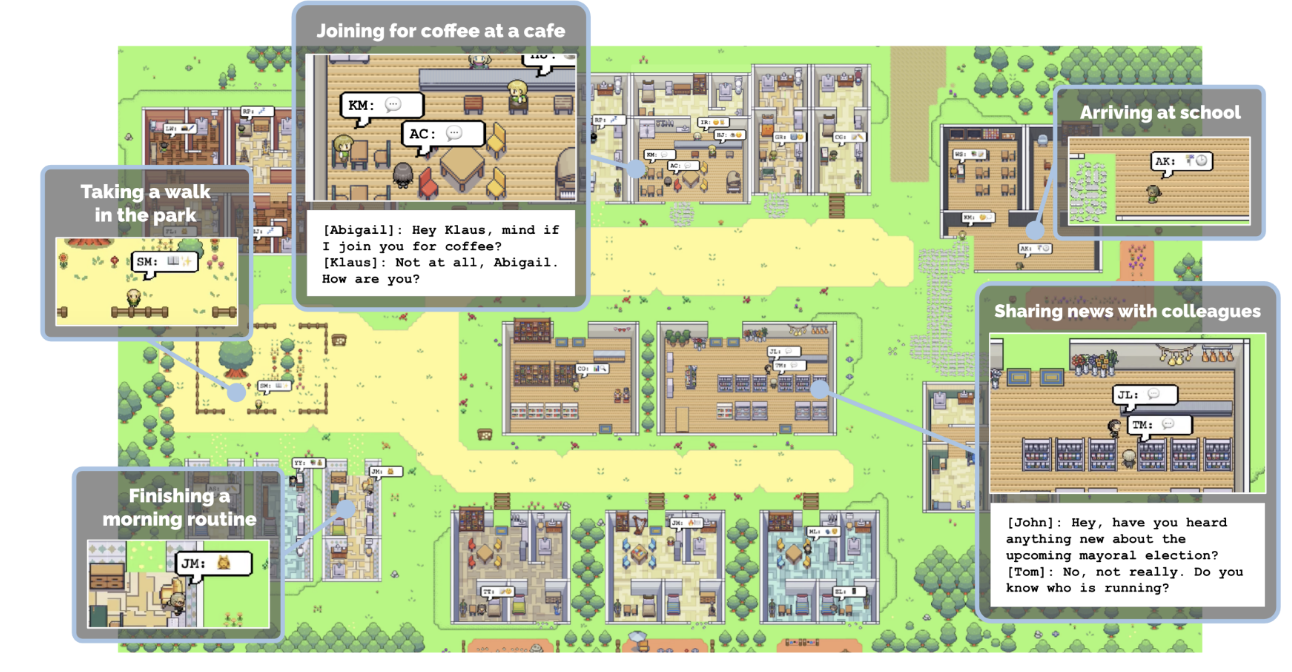

The one truly foundational paper for agentic memory is Generative Agents: Interactive Simulacra of Human Behavior (Park et al., 2023). In this famous work, the authors created 25 agents living in a simulated town called Smallville, where one of them organized a Valentine's Day party.

Many people remember this paper but usually summarize it vaguely ("agents interacted with each other"). What we are interested in now is the internal architecture.

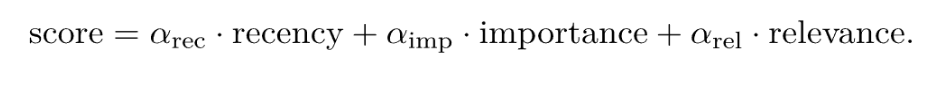

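The memory stream. Memory in Park et al. is a simple memory stream, that is, an append-only log of natural language observations with timestamps. On each query, the agent ranks all memories by a linear combination of three normalized components:

$$\text{score} = \alpha_{\text{recency}} \cdot \text{recency} + \alpha_{\text{importance}} \cdot \text{importance} + \alpha_{\text{relevance}} \cdot \text{relevance}$$

Let us unpack each one.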

Recency is an exponential decay with factor 0.995 per game-hour since the memory was last accessed. So memories persist for a long time but slowly fade, and every access "refreshes" them. This is strikingly similar to how human declarative memory works: a memory you revisit frequently stays vivid, while one you never think about gradually dims.

Importance is the most interesting component. It is assigned by the LLM itself. When a memory is recorded, the model is asked a direct question: "On a scale of 1 to 10, where 1 is purely mundane (e.g., brushing teeth, making bed) and 10 is extremely poignant (e.g., a breakup, college acceptance), rate the likely poignancy of the following piece of memory". The authors give concrete examples: "cleaned the room" gets a 2, while "asked out a long-time crush" gets an 8. It is a very human-centric approach, and it turns out that this crude scale is already very useful for keeping memory from being flooded with routine.

Relevance is cosine similarity between the query embedding and the memory embedding; this is standard semantic search.

Interestingly, in the official implementation all three weights α are set to 1, that is, there is no actual weighting. But even without learned coefficients, this turned out to be a solid method that is still used as a baseline.
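Here is a minimal sketch of this scoring rule in plain Python. The 0.995 decay, the 1 to 10 importance scale, the min-max normalization, and the unit weights follow the description above; the `MemoryRecord` class, the two-dimensional toy embeddings, and the choice to map tied values to 0.5 are my own assumptions for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class MemoryRecord:
    text: str
    importance: int            # 1-10, assigned by the LLM at write time
    hours_since_access: float  # game-hours since the memory was last retrieved
    embedding: list[float]


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def min_max(values: list[float]) -> list[float]:
    lo, hi = min(values), max(values)
    return [0.5 if hi == lo else (v - lo) / (hi - lo) for v in values]


def rank(memories: list[MemoryRecord], query_embedding: list[float],
         weights: tuple[float, float, float] = (1.0, 1.0, 1.0)) -> list[MemoryRecord]:
    """Order memories by weighted recency + importance + relevance, best first."""
    recency = min_max([0.995 ** m.hours_since_access for m in memories])
    importance = min_max([float(m.importance) for m in memories])
    relevance = min_max([cosine(m.embedding, query_embedding) for m in memories])
    a_rec, a_imp, a_rel = weights
    scores = [a_rec * r + a_imp * i + a_rel * v
              for r, i, v in zip(recency, importance, relevance)]
    order = sorted(range(len(memories)), key=lambda idx: scores[idx], reverse=True)
    return [memories[idx] for idx in order]


stream = [
    MemoryRecord("brushed teeth", 1, 1.0, [1.0, 0.0]),
    MemoryRecord("asked out a long-time crush", 8, 48.0, [0.0, 1.0]),
    MemoryRecord("cleaned the room", 2, 200.0, [0.6, 0.8]),
]
for record in rank(stream, query_embedding=[0.0, 1.0]):
    print(record.text)
```

In this toy stream the two-day-old but important and relevant memory outranks the fresh but mundane one, which is exactly the behavior the three-term sum is meant to produce.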

Reflection. The second important contribution of Park et al. is the reflection mechanism. Periodically, when the cumulative importance of recently added memories exceeds a threshold, the agent generates three "important" questions about its recent experience, retrieves relevant memories for each, and asks the LLM to synthesize a higher-level insight. The result is written back into the memory stream with links to the child memories it came from. Over time, this produces a tree: raw observations at the base, reflections above them, meta-reflections above those, and so on.

This is the substrate of memory in this system: a structure where meaning gradually crystallizes from raw observations, much like how a human might accumulate a lot of individual experiences and then one day realize, "Oh, I think my colleague is actually unhappy at work" without being able to point to any single conversation that told them so.
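The reflection trigger can be sketched in a few lines. This is a simplification under stated assumptions: the `ReflectiveStream` class is a hypothetical name, `synthesize` stands in for the LLM call, the threshold value is arbitrary, and the question-generation and retrieval steps are skipped, so the sketch reflects over everything added since the previous reflection.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ReflectiveStream:
    synthesize: Callable[[list[str]], str]   # stand-in for the LLM that writes the insight
    threshold: int                           # cumulative importance that triggers reflection
    entries: list[dict] = field(default_factory=list)
    pending_importance: int = 0

    def observe(self, text: str, importance: int) -> None:
        self.entries.append({"text": text, "kind": "observation", "children": []})
        self.pending_importance += importance
        if self.pending_importance >= self.threshold:
            self._reflect()

    def _reflect(self) -> None:
        # Start right after the most recent reflection (or at the beginning of the stream).
        start = max((i + 1 for i, e in enumerate(self.entries) if e["kind"] == "reflection"),
                    default=0)
        children = list(range(start, len(self.entries)))
        insight = self.synthesize([self.entries[i]["text"] for i in children])
        # The reflection is written back into the same stream, with links to its sources.
        self.entries.append({"text": insight, "kind": "reflection", "children": children})
        self.pending_importance = 0


stream = ReflectiveStream(
    synthesize=lambda texts: f"insight drawn from {len(texts)} memories",
    threshold=10,
)
stream.observe("colleague skipped lunch", 4)
stream.observe("colleague sighed in the meeting", 3)
stream.observe("colleague asked about other jobs", 6)
print(stream.entries[-1])
```

Because reflections land in the same stream as observations, later reflections can cite earlier ones, which is how the tree described above grows.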

Why am I spending so much time on a paper from 2023? Because it is an archetype. Almost everything that came later in agent memory either refines some components of this formula or replaces them with something more complex. The overall template, however, remains the same.

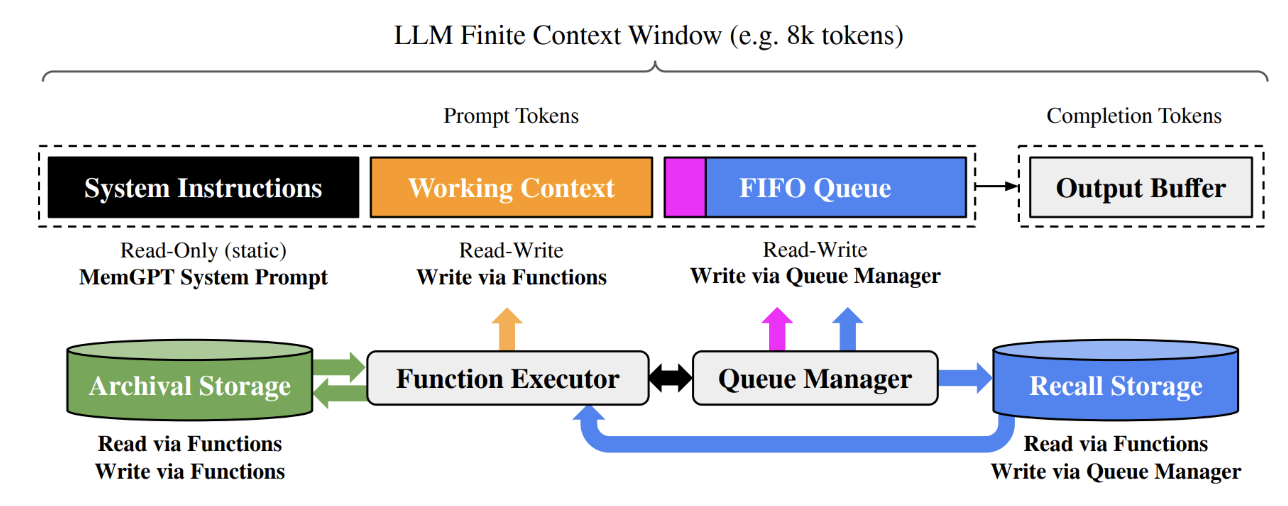

MemGPT / Letta: Memory as an Operating System

The next landmark paper appeared about six months later: MemGPT: Towards LLMs as Operating Systems (Packer et al., 2023). The idea is to look at LLM memory the way we look at memory in an operating system: an OS also has limited RAM, and there are well-developed mechanisms for hierarchical storage, swapping, and so on — maybe we can transfer these ideas to LLMs?

If you have ever wondered why your computer can handle having dozens of browser tabs open when it only has 16 GB of RAM, the answer is virtual memory: the OS seamlessly shuffles data between fast RAM and slower disk storage, creating the illusion of nearly unlimited memory. MemGPT does essentially the same thing for an LLM's context window. The context window is the "RAM", which is small and fast but limited. Everything that does not fit gets pushed to "disk" (external storage), and the agent explicitly decides what to swap in and out.

MemGPT has two levels. The main context is everything actually in the prompt right now: system instructions (read-only), a working context (a fixed-size read/write block that the LLM edits via explicit tool calls, that is, essentially the agent's persona and key facts about the user), and a FIFO queue (a sliding window of recent messages with recursive summarization of whatever gets pushed out).

The external context (not in the prompt) contains the recall storage (a full message log, searchable) and archival storage (a read/write text store where anything can be placed).

The crucial part is how the agent uses all this. There is a set of tool calls: `core_memory_append`, `core_memory_replace`, `archival_memory_insert`, `archival_memory_search`, plus search over recall storage. The LLM itself decides when to read or write, using this API to manage its own memory.

MemGPT also introduced memory pressure warnings. When tokens in the main context cross a warning threshold, the system injects a service message like “you are running low on space, time to move something to the archive”. This is loosely analogous to an OS signaling memory pressure: the agent has to respond, much like a process handling a signal from the kernel. If the context fills up anyway, the system evicts roughly half of the context window and replaces it with a recursive summary. Remarkably, even in 2023 no fine-tuning was needed for this, as GPT-4 was able to handle it on prompting alone.
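A rough sketch of the queue mechanics, under loud assumptions: `MainContext` is a hypothetical class (not the MemGPT or Letta API), tokens are counted by whitespace splitting, the 70% warning threshold is arbitrary, and `summarize` is a stand-in for the LLM call that folds evicted messages into the running summary.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


def count_tokens(text: str) -> int:
    return len(text.split())  # crude stand-in for a real tokenizer


@dataclass
class MainContext:
    summarize: Callable[[str, list[str]], str]  # (old summary, evicted messages) -> new summary
    max_tokens: int
    warn_fraction: float = 0.7                  # assumed warning threshold
    summary: str = ""
    queue: list[str] = field(default_factory=list)
    recall_storage: list[str] = field(default_factory=list)

    def queue_tokens(self) -> int:
        return sum(count_tokens(m) for m in self.queue)

    def append(self, message: str) -> Optional[str]:
        """Add a message; return a warning string when the context is under pressure."""
        self.queue.append(message)
        self.recall_storage.append(message)     # the full log always lives outside the prompt
        used = count_tokens(self.summary) + self.queue_tokens()
        if used > self.max_tokens:
            self._flush()
            return None
        if used > self.warn_fraction * self.max_tokens:
            return "memory pressure: move important facts to archival storage"
        return None

    def _flush(self) -> None:
        evicted: list[str] = []
        # Evict the oldest messages until the queue fits in half of the budget.
        while self.queue and self.queue_tokens() > self.max_tokens // 2:
            evicted.append(self.queue.pop(0))
        self.summary = self.summarize(self.summary, evicted)


ctx = MainContext(summarize=lambda old, gone: f"summary of {len(gone)} evicted messages",
                  max_tokens=20)
signals = [ctx.append(f"message number {i} goes here") for i in range(5)]
print(signals)
print(ctx.summary, "|", ctx.queue)
```

The warning gives the agent a chance to save what matters before the flush; whatever it does not save survives only in the summary and in the searchable recall log.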

Conceptually, MemGPT was the first to implement the principle that the agent manages its own memory. Before it, memory was passive, that is, some external engine wrote and read things, and the agent just consumed the results. This idea became the standard approach and has been repeated in a hundred different forms since.

Today MemGPT lives on as the framework Letta, where the same principles are implemented in a more modern form.

Reflexion: Verbal Reinforcement Learning

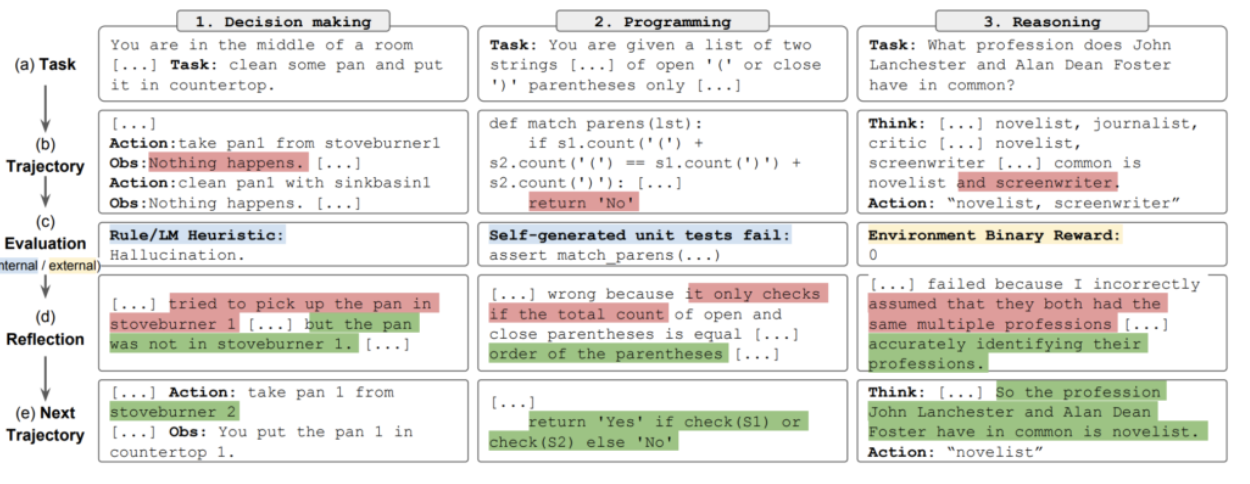

The third pillar is "Reflexion: Language Agents with Verbal Reinforcement Learning" (Shinn et al., 2023). Despite the name, there is still no actual fine-tuning here, but there is a clever imitation of it.

Suppose an agent was performing a task, made a mistake, and got negative feedback. In classical reinforcement learning, we would compute a gradient, update the policy's weights, and run the next episode. The idea of Reflexion is to instead ask the LLM to simply *write out what went wrong*, and then attach that text to the prompt on the next attempt. The entire "gradient step" happens inside the context window, and the model learns without touching a single parameter.

Imagine a chess player who, after losing a game, writes a note to themselves: "I lost because I left my queen undefended on move 14. Next time, always check the queen’s safety before advancing pawns". Then they tape that note to their monitor before the next game. That is Reflexion. The "note" is what the authors call a semantic gradient: a specific direction for improvement, expressed in words rather than numbers.

The architecture has three modules: an actor (the LLM generating actions, usually in ReAct style), an evaluator (assessing the trajectory — exact match, heuristics, or LLM-as-a-judge), and a self-reflection module (a separate LLM call that takes the full trajectory and the reward and writes a postmortem: "I made a mistake here because X; next time I should first do Y").

These postmortems are stored in a long-term memory buffer, limited to 1–3 entries, which get attached to the actor's prompt on the next iteration. There is one counterintuitive finding here: more reflections are not better. The 1–3 entry limit is not a technical constraint; rather, with a larger buffer, the agent drowns in contradictory advice and performance drops. This is an idea we will return to: compressing memory is often more important than accumulating it.
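The whole loop fits in a short function. This is a sketch, not the authors' code: `act`, `evaluate`, and `reflect` are hypothetical callables standing in for the three modules, and the toy lambdas below replace real LLM calls so the example runs on its own.

```python
from collections import deque
from typing import Callable


def reflexion_loop(
    act: Callable[[str, list[str]], str],   # (task, reflections) -> attempt
    evaluate: Callable[[str], bool],        # attempt -> success?
    reflect: Callable[[str, str], str],     # (task, failed attempt) -> postmortem text
    task: str,
    max_trials: int = 5,
    buffer_size: int = 3,
) -> tuple[str, int]:
    """Retry a task, feeding a bounded buffer of verbal postmortems into each new attempt."""
    reflections: deque = deque(maxlen=buffer_size)  # oldest postmortems fall out automatically
    attempt = ""
    for trial in range(1, max_trials + 1):
        attempt = act(task, list(reflections))
        if evaluate(attempt):
            return attempt, trial
        reflections.append(reflect(task, attempt))
    return attempt, max_trials


# Toy stand-ins: the "actor" only gets the answer right once a postmortem tells it to add.
result = reflexion_loop(
    act=lambda task, notes: "4" if any("add" in note for note in notes) else "22",
    evaluate=lambda attempt: attempt == "4",
    reflect=lambda task, attempt: f"I answered {attempt} by concatenating. Next time, add the numbers.",
    task="What is 2 + 2?",
)
print(result)
```

The `deque(maxlen=...)` is the whole memory policy here: no weights change, and the only thing carried between trials is a few sentences of text.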

Graphs and Neuroscience: Giving a Language Model a Hippocampus

HippoRAG

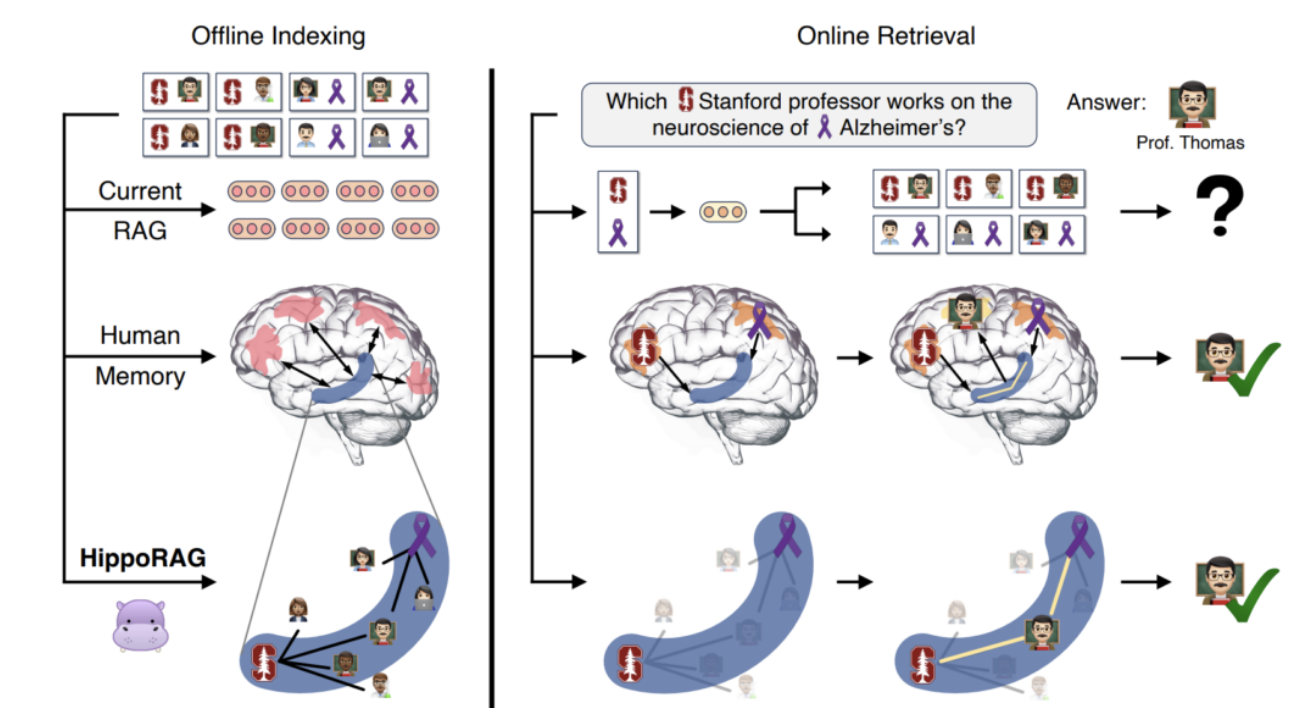

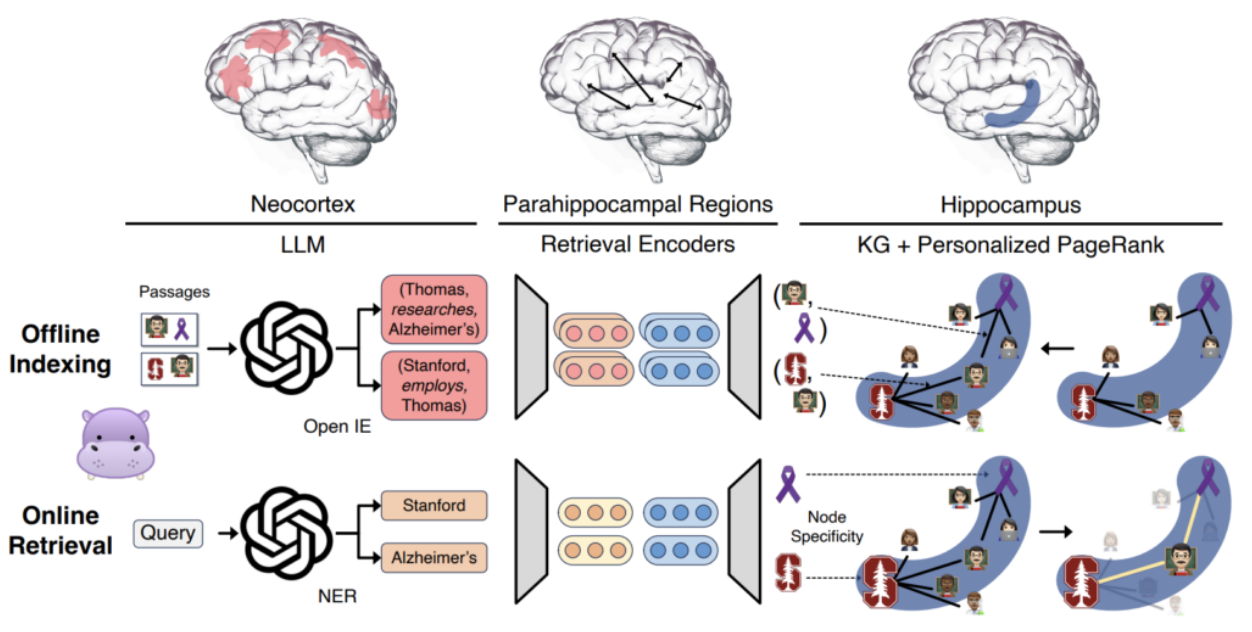

Alongside the simple idea of memory as a text log, there has been a parallel line of research on memory as a graph. One of the most elegant works in this direction is “HippoRAG: Neurobiologically Inspired Long-Term Memory for Large Language Models” (Gutiérrez et al., 2024).

The idea is right there in the name: let us look at how long-term memory works in humans (as far as we understand it) and try to reproduce the architecture. In simplified form, our memory system looks like this:

- the neocortex processes sensory information and extracts "concepts";

- the parahippocampal region detects similarity between these concepts;

- the hippocampus indexes everything as an associative graph — a network of links between concepts and memory entries.

This translates nicely into RAG. The neocortex is an LLM doing Open Information Extraction (breaking passages into subject–predicate–object triples). The parahippocampal region is a retrieval encoder that computes cosine similarity between entity embeddings and adds "synonymy edges" between those above a threshold — automatically merging synonymous concepts without explicit entity resolution. The hippocampus is the resulting knowledge graph.

When a query comes in, HippoRAG does not walk the graph step by step using LLM calls (that would be slow and expensive). Instead, it extracts named entities from the query, maps them to graph nodes as "entry points", and runs Personalized PageRank (PPR), the same algorithm Google originally used for web search, but starting from the query's entity nodes rather than from a uniform distribution. The result is a probability distribution over all nodes, which provides the ranking.

If you have never encountered PageRank: imagine you are lost in a city and you start wandering randomly from intersection to intersection, following roads at random. After a long time, the intersections you visit most frequently are the most "important": they are well-connected hubs. Personalized PageRank is the same idea, except instead of starting from a random intersection, you always start from specific places (the entities mentioned in your query). The nodes you visit most frequently from those starting points are the most relevant memories.

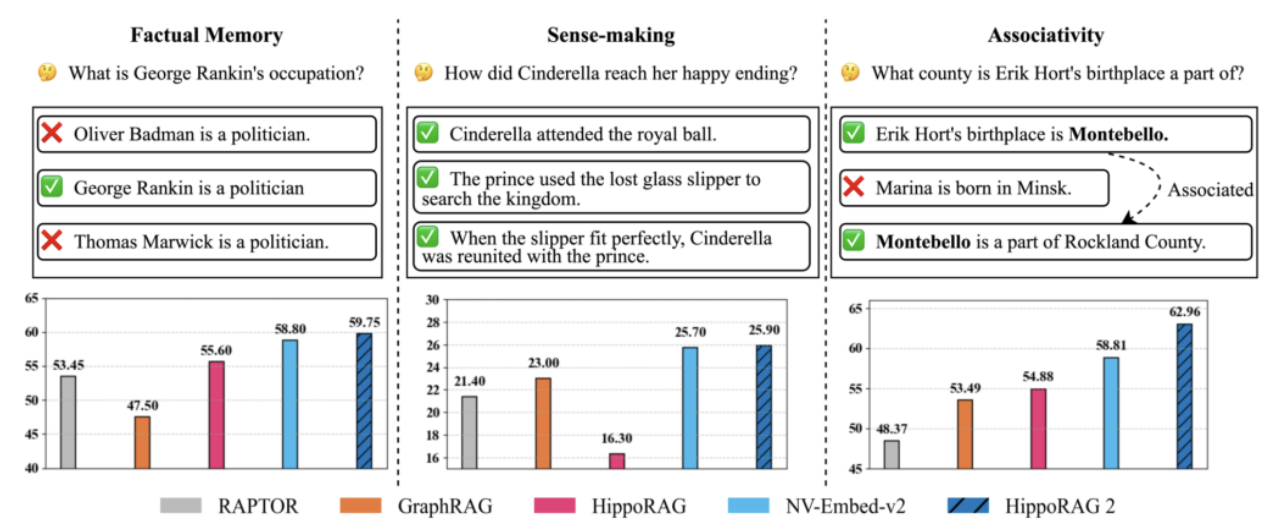

This turned out to be a very cheap and fast method compared to RAG approaches that make multiple LLM calls, and it simply produced better results than standard one-shot RAG.
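To see the random-walk picture in code, here is a minimal Personalized PageRank by power iteration over a toy entity graph. The graph, the damping value, and the handling of dangling nodes are assumptions made for this sketch; HippoRAG itself runs PPR over a much larger graph built from extracted triples and then maps node scores back to passages.

```python
def personalized_pagerank(
    graph: dict[str, list[str]],
    seeds: set[str],
    damping: float = 0.5,
    iterations: int = 50,
) -> dict[str, float]:
    """Power iteration for PageRank whose restarts jump only to the seed nodes."""
    restart = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in graph}
    rank = dict(restart)
    for _ in range(iterations):
        nxt = {n: (1.0 - damping) * restart[n] for n in graph}
        for node, neighbors in graph.items():
            targets = neighbors or list(seeds)   # dangling nodes send their mass back to seeds
            share = damping * rank[node] / len(targets)
            for target in targets:
                nxt[target] += share
        rank = nxt
    return rank


# Toy knowledge graph as an undirected adjacency list; "Tokyo"/"Japan" are disconnected.
graph = {
    "Alice": ["Google", "Berlin"],
    "Google": ["Alice", "Search"],
    "Berlin": ["Alice", "Germany"],
    "Search": ["Google"],
    "Germany": ["Berlin"],
    "Tokyo": ["Japan"],
    "Japan": ["Tokyo"],
}
scores = personalized_pagerank(graph, seeds={"Alice"})
for node, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{node:8s} {score:.3f}")
```

Nodes near the query entity get most of the probability mass, two-hop neighbors get less, and the disconnected component gets none, all without a single LLM call at query time.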

In HippoRAG 2 (Gutiérrez et al., 2025), the authors addressed the main weakness of the first version: on simple factoid questions, HippoRAG sometimes lost to vanilla RAG because the graph diffusion blurred simple cases. The second version adds better passage integration, which helped with associative linking.

The catch is that many errors now come from NER / entity extraction — not from the graph search itself, but from the LLM failing to correctly extract entities from the text. In essence, HippoRAG handles the *memory retrieval* beautifully; the bottleneck is *building the graph* in the first place.

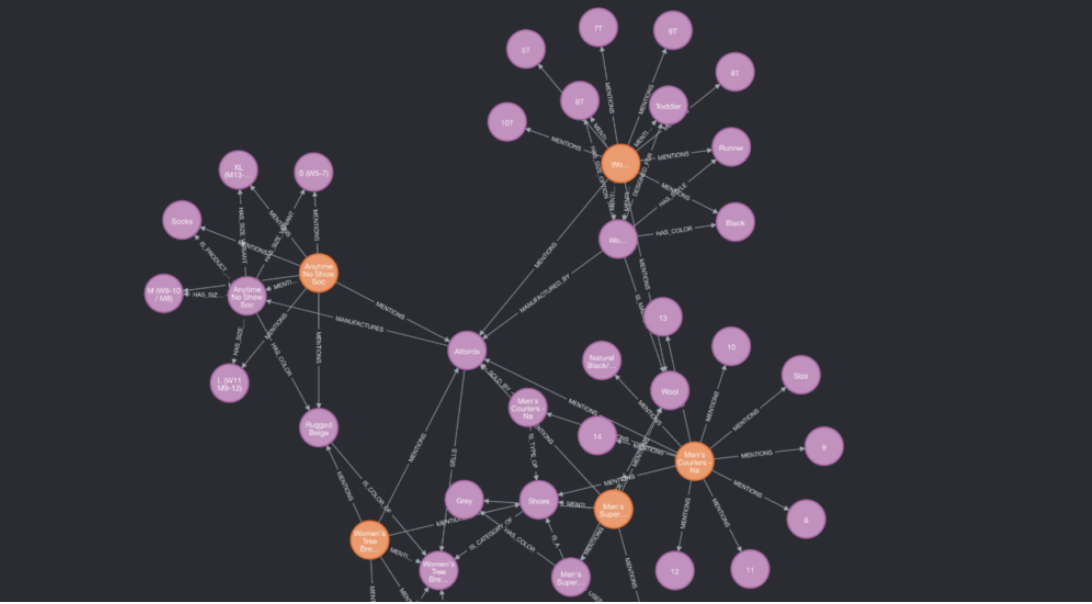

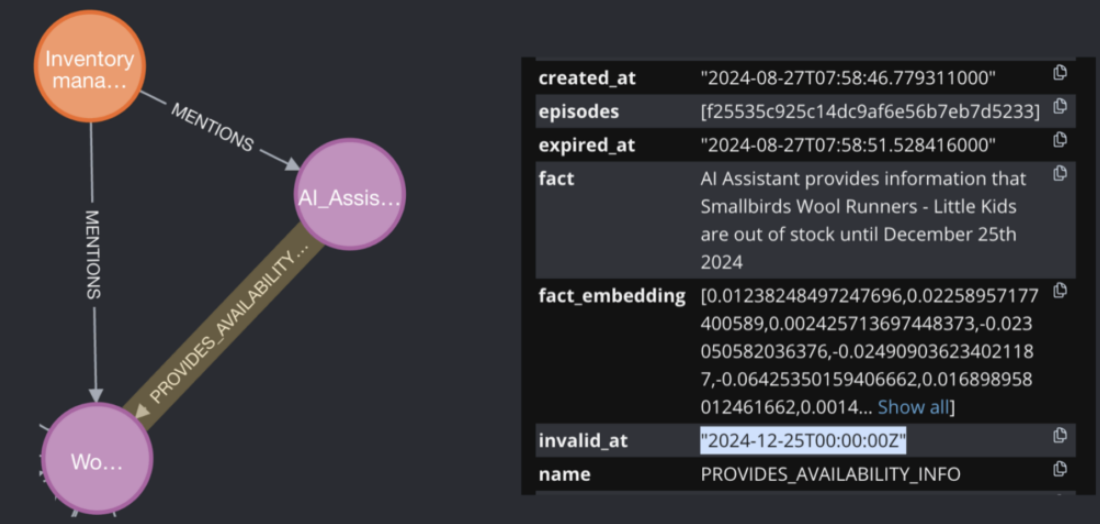

Zep / Graphiti: Time-Aware Graphs

A similar idea has been brought to production by Zep and its memory engine Graphiti (Rasmussen et al., 2025). The key innovation is a bi-temporal knowledge graph: each fact carries a validity interval, valid_at (when the fact became true) and invalid_at (when it stopped being true), tracked separately from the timestamps that record when the system itself learned or retired the fact.

If in January the user said "I live in Berlin" and in March said "I moved to Lisbon," the first fact is not deleted — it just gets invalid_at = March. And if you ask "Where did the user live in February?" the system returns the correct answer.

Think of it like version control for facts. In Git, you do not delete old code when you write new code; you keep the full history, and you can always check out any point in time. Graphiti does the same thing for knowledge: every fact has a validity window, and the system can answer questions about any moment in the past, not just the present.
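A minimal sketch of validity windows, assuming a made-up `TemporalFacts` store. Only the `valid_at` / `invalid_at` field names come from the text; the class, its methods, and the rule that a new fact closes the previous one for the same subject and predicate are illustrative choices, not Graphiti's API.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Fact:
    subject: str
    predicate: str
    value: str
    valid_at: date
    invalid_at: Optional[date] = None   # None means "still true"


class TemporalFacts:
    def __init__(self) -> None:
        self.facts: list[Fact] = []

    def assert_fact(self, subject: str, predicate: str, value: str, when: date) -> None:
        # Close the currently open fact for the same subject/predicate instead of deleting it.
        for fact in self.facts:
            if (fact.subject, fact.predicate) == (subject, predicate) and fact.invalid_at is None:
                fact.invalid_at = when
        self.facts.append(Fact(subject, predicate, value, valid_at=when))

    def as_of(self, subject: str, predicate: str, when: date) -> Optional[str]:
        """Return the value whose validity window contains `when`, if any."""
        for fact in self.facts:
            if (fact.subject, fact.predicate) != (subject, predicate):
                continue
            if fact.valid_at <= when and (fact.invalid_at is None or when < fact.invalid_at):
                return fact.value
        return None


kb = TemporalFacts()
kb.assert_fact("user", "lives_in", "Berlin", date(2025, 1, 10))
kb.assert_fact("user", "lives_in", "Lisbon", date(2025, 3, 5))
print(kb.as_of("user", "lives_in", date(2025, 2, 15)))
print(kb.as_of("user", "lives_in", date(2025, 4, 1)))
```

Nothing is ever deleted in this scheme, so the February question and the April question can both be answered from the same store.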

Graphiti also combines semantic search over embeddings, traditional BM25 keyword search, and graph traversal, with extraction and indexing happening asynchronously in the background. It is currently one of the most mature and popular solutions for long-term memory with temporal logic.

Mutable Memory: Rewriting Your Own Memories

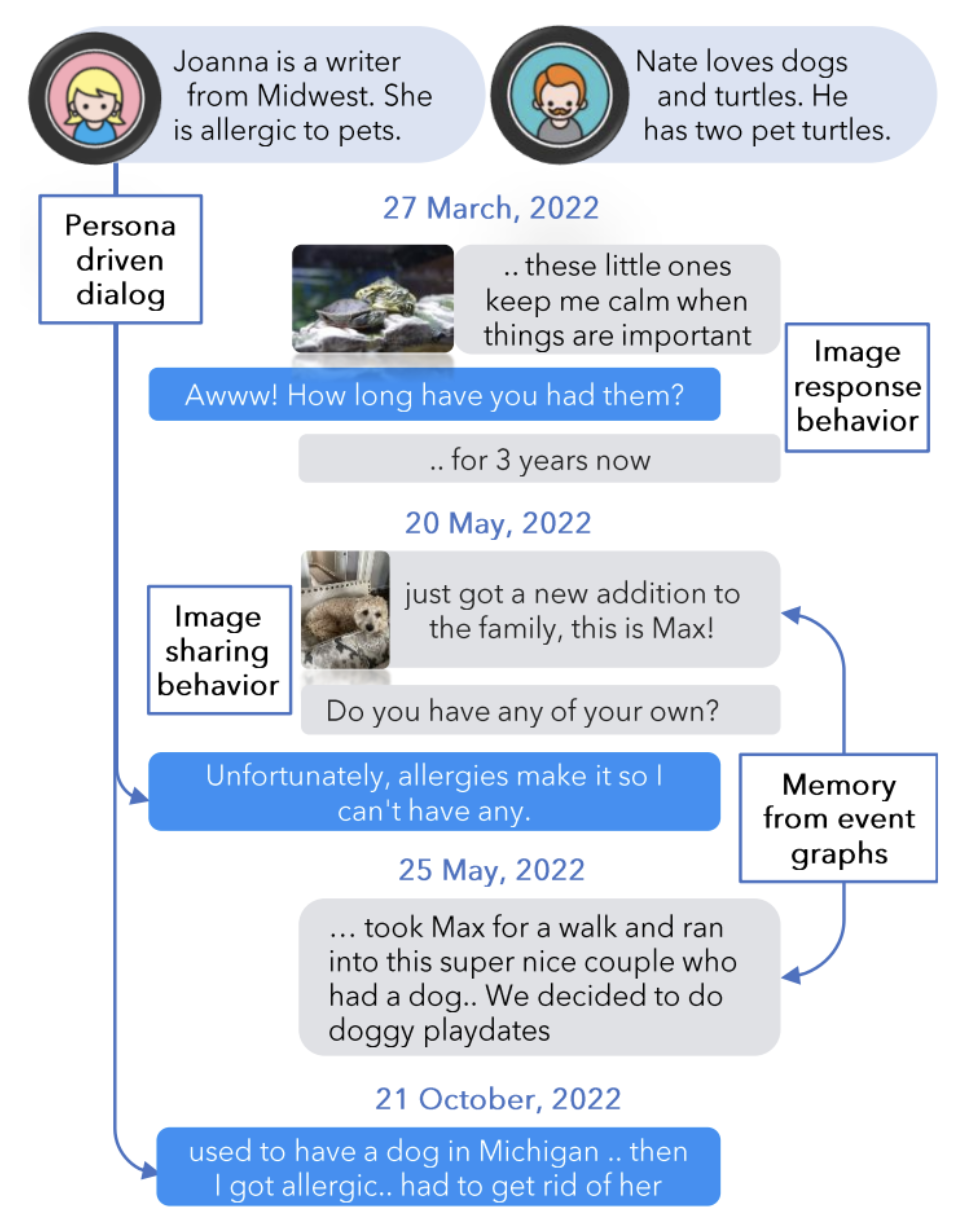

Another interesting architectural turn is the idea that memory should be mutable. Most systems we have discussed only add new items, but human memory, as we have known for over a century, constantly reconsolidates — old memories are rewritten under the influence of new ones. Here is an illustration from Xu et al. (2025):

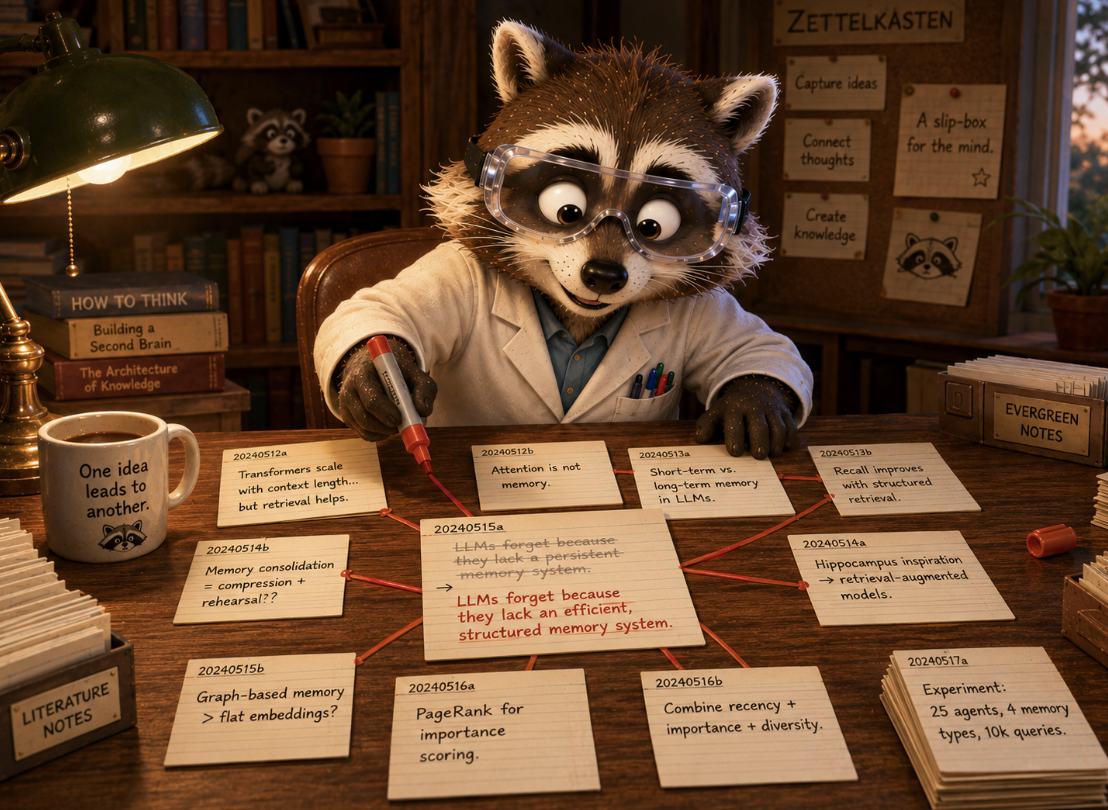

A-MEM: A Zettelkasten for Models

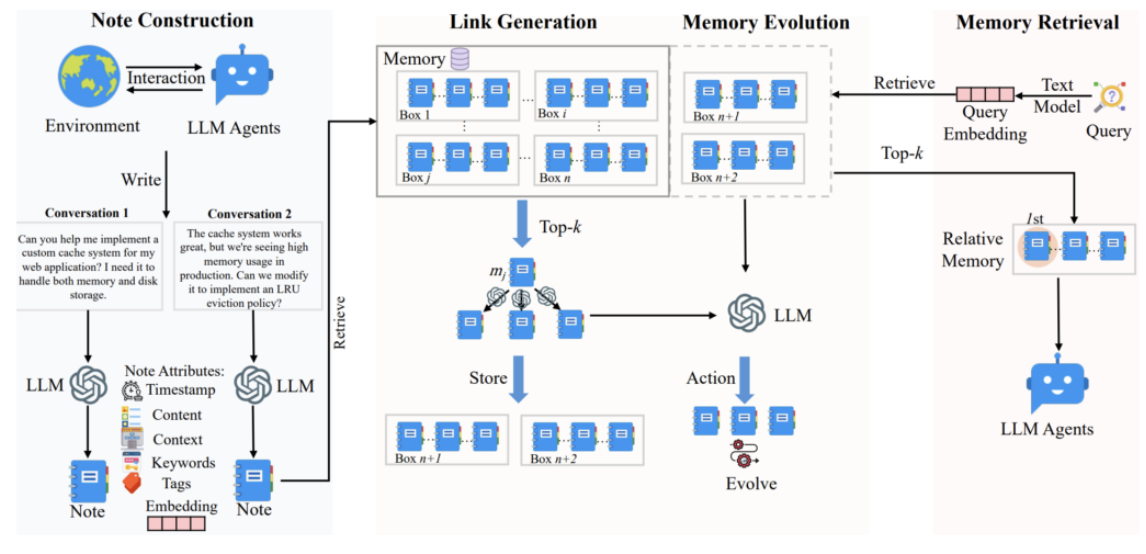

A-MEM (Xu et al., 2025) takes the Zettelkasten method as its foundation. Zettelkasten is a system of index cards developed by the German sociologist Niklas Luhmann, starting in the 1950s, and now beloved by Obsidian enthusiasts. The idea is that each memory is an atomic note that links to other notes, and the graph of these links is your external memory.

If you have ever used a note-taking app like Obsidian or Roam Research, you know the feeling: you write a note about an idea, link it to three related notes, and over time a web of connections emerges that is more useful than any single note on its own. A-MEM brings this concept to LLM agents.

Each note in A-MEM has seven fields: raw content, timestamp, keywords, tags, and a contextual description (the last three generated by the LLM), plus a vector embedding and links to related notes.

The killer feature is that when a new note is linked to an old one, the LLM is asked to update the old note's description, keywords, and tags in light of the new information. Literally: "Here is old note X, here is new note Y that is related to it; now rewrite X so that it accounts for what Y told you". The authors call this memory evolution: old memories evolve as new ones accumulate — much like how your understanding of a friend changes over years of knowing them, even if no single conversation dramatically altered your view.
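Here is how the note structure and the evolution step might look in code. The seven fields mirror the list above, but the `Note` class, the `add_note` function, and the `evolve` callable are hypothetical; in the real system the related notes are found by the system itself and the rewrite is done by an LLM, whereas this sketch takes the related indices as an argument and uses a toy merge function.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Callable


@dataclass
class Note:
    content: str                 # raw content, never rewritten
    timestamp: datetime
    keywords: list[str]
    tags: list[str]
    context: str                 # contextual description
    embedding: list[float]
    links: list[int] = field(default_factory=list)   # indices of related notes


def add_note(notes: list[Note], new: Note, related: list[int],
             evolve: Callable[[Note, Note], dict]) -> None:
    """Link `new` to the `related` notes and let `evolve` rewrite the old notes' metadata."""
    new_index = len(notes)
    notes.append(new)
    for idx in related:
        old = notes[idx]
        old.links.append(new_index)
        new.links.append(idx)
        update = evolve(old, new)    # expected keys: "context", "keywords", "tags"
        old.context = update["context"]
        old.keywords = update["keywords"]
        old.tags = update["tags"]


def toy_evolve(old: Note, new: Note) -> dict:
    # Stand-in for the LLM prompt "rewrite X so that it accounts for what Y told you".
    return {
        "context": f"{old.context} Later refined by: {new.content}",
        "keywords": sorted(set(old.keywords) | set(new.keywords)),
        "tags": sorted(set(old.tags) | set(new.tags)),
    }


notes = [Note("User is learning Rust", datetime(2025, 1, 5), ["rust"], ["programming"],
              "User started learning Rust.", [0.1, 0.9])]
add_note(notes,
         Note("User ships a Rust CLI at work", datetime(2025, 3, 1), ["rust", "cli"],
              ["programming", "work"], "User uses Rust professionally.", [0.2, 0.8]),
         related=[0], evolve=toy_evolve)
print(notes[0].keywords, notes[0].tags, notes[0].links)
```

Note that only the derived fields of the old note change; its raw content stays as written, so the original observation is still recoverable.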

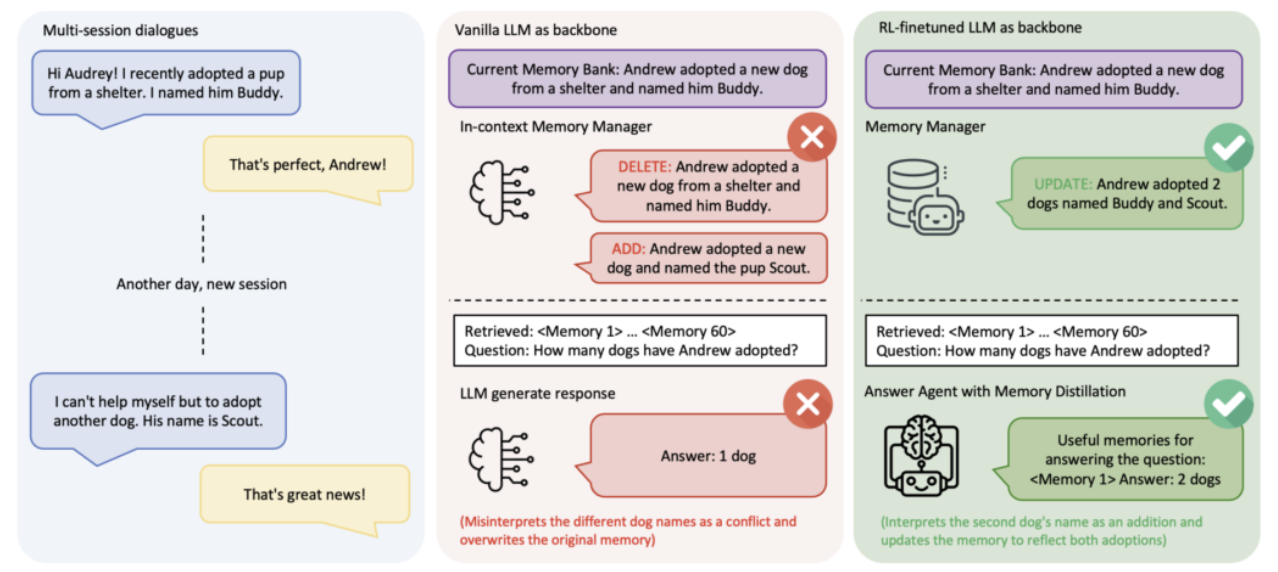

Memory-R1: Add/Update/Delete via RL

Our next system finally takes the step of actually training something. Not the LLM itself, however, but a specialized memory management agent.

Memory-R1 (Yan et al., 2025) does not hand-write rules for memory updates and does not leave it up to a standard LLM either — it learns the rules through reinforcement learning.

The agent chooses one of four actions for each new piece of information: ADD, UPDATE, DELETE, NOOP.

The reward comes from the agent's final answers on downstream tasks. There are no direct labels for "which operation is correct" — we learn purely from outcomes: if the generated answer turned out to be right, the policy gets rewarded.
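The action space and the outcome-based reward can be illustrated with a toy memory bank. Only the four operation names come from the text; `apply_op`, `outcome_reward`, and the toy answerer are assumptions for illustration. In Memory-R1 the answers are produced by an LLM and the policy is trained with RL, neither of which is shown here.

```python
from enum import Enum
from typing import Callable, Optional


class Op(Enum):
    ADD = "ADD"
    UPDATE = "UPDATE"
    DELETE = "DELETE"
    NOOP = "NOOP"


def apply_op(bank: dict[int, str], op: Op, text: str = "", target: Optional[int] = None) -> None:
    """Apply one memory operation to a bank of id -> memory text."""
    if op is Op.ADD:
        bank[max(bank, default=-1) + 1] = text
    elif op is Op.UPDATE and target in bank:
        bank[target] = text
    elif op is Op.DELETE and target in bank:
        del bank[target]
    # NOOP (or an unknown target) leaves the bank unchanged.


def outcome_reward(bank: dict[int, str], answer_fn: Callable[[dict[int, str], str], str],
                   question: str, gold: str) -> float:
    """1.0 only if the answer produced from the final bank matches the gold answer."""
    return 1.0 if answer_fn(bank, question).strip().lower() == gold.strip().lower() else 0.0


def toy_answerer(bank: dict[int, str], question: str) -> str:
    # Naive reader: trusts the first memory that mentions where the user lives.
    return next((m.split()[-1] for m in bank.values() if "lives" in m), "")


bank_add = {0: "User lives in Berlin"}
apply_op(bank_add, Op.ADD, "User lives in Lisbon")              # stale fact stays in the bank

bank_update = {0: "User lives in Berlin"}
apply_op(bank_update, Op.UPDATE, "User lives in Lisbon", target=0)

question, gold = "Where does the user live?", "Lisbon"
print(outcome_reward(bank_add, toy_answerer, question, gold))
print(outcome_reward(bank_update, toy_answerer, question, gold))
```

Nobody labeled UPDATE as the correct operation here; it simply earns the reward because the downstream answer comes out right, which is the training signal described above.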

The training uses PPO and GRPO (Group Relative Policy Optimization — the algorithm originally proposed by DeepSeek). And the especially remarkable thing is the training set size: just 152 QA pairs. This tiny dataset is enough to get substantial improvements over prior solutions like MemoryOS.

Memory-R1 is, in my view, one of the most promising directions: it honestly acknowledges that memory operations are a hard decision problem that cannot always be solved by simple heuristics.

Open-Source Frameworks: What You Can Actually Use Today

Let us briefly survey what is available for production use.

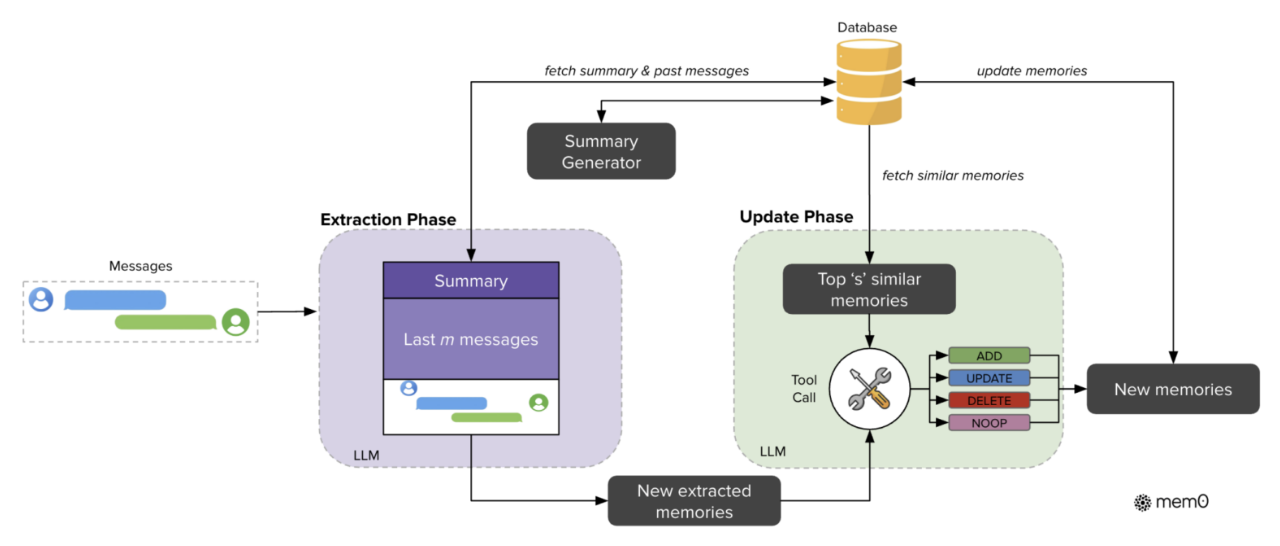

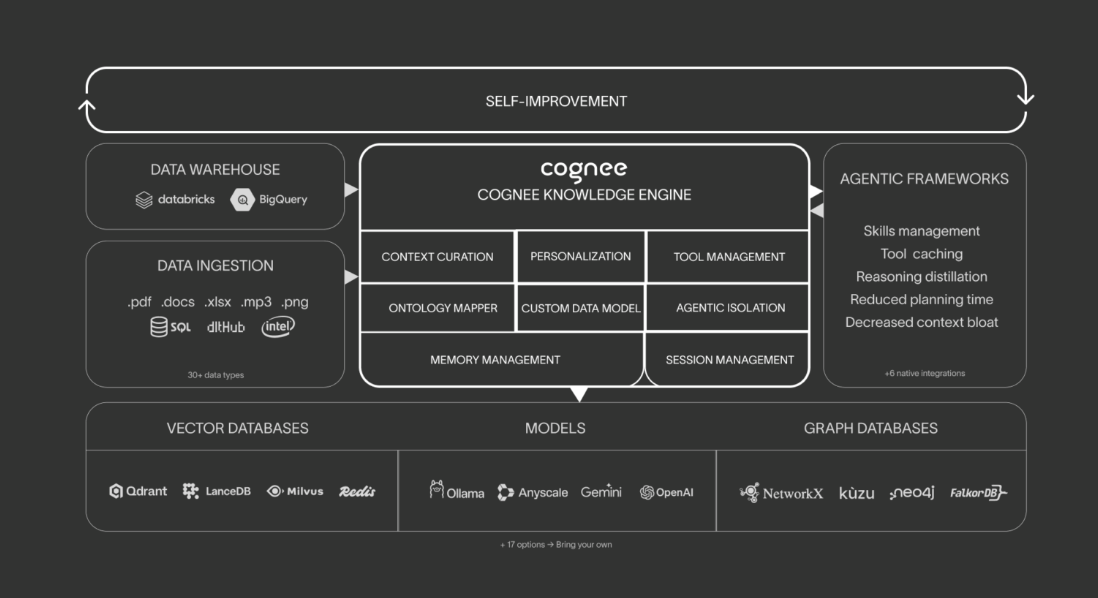

Mem0 (Chhikara et al., 2025) is probably the GitHub star champion among memory ecosystems. Under the hood it runs Qdrant/Chroma/PGVector for semantic search, a key-value store for structured queries, and, optionally, Neo4j for graph storage. When you call `add()`, the LLM extracts facts and preferences, deduplicates, and updates on conflict. When you call `search()`, everything is ranked by relevance/importance/recency.

The main virtue is an extremely simple API; it takes literally one line to add memory, and Mem0 is still a very strong baseline.

Letta (the production reincarnation of MemGPT) and Zep / Graphiti are also popular in practice, but I have already covered them above.

LangChain has a module called LangMem with a very simple implementation of procedural memory: the agent rewrites its own system prompt as it learns.

LlamaIndex has composable memory blocks with a priority token budget.

CrewAI has multi-agent shared memory with explicit recency/similarity/importance weights, reminiscent of Park et al. (2023). And Cognee is a standalone memory library with an "Extract → Cognify → Load" pipeline that can plug into anything, including Claude Code.

Overall, if you are doing LLM-based agents in any shape or form, you are probably just a few lines of code away from adding a memory engine to the agents.

As for the big three, over 2025, OpenAI, Anthropic, and Google all added persistent memory with import/export. Their approaches differ philosophically: ChatGPT and Gemini store memory transparently and use it automatically, while Claude starts each conversation from a blank slate and loads memory only via explicit, user-visible tool calls. Here’s a detailed description of how memory works for Claude.

What Is Happening on the Frontier

In the past year, agent memory has been developing rapidly. A detailed survey would take dozens upon dozens of pages, but let me offer a few highlights.

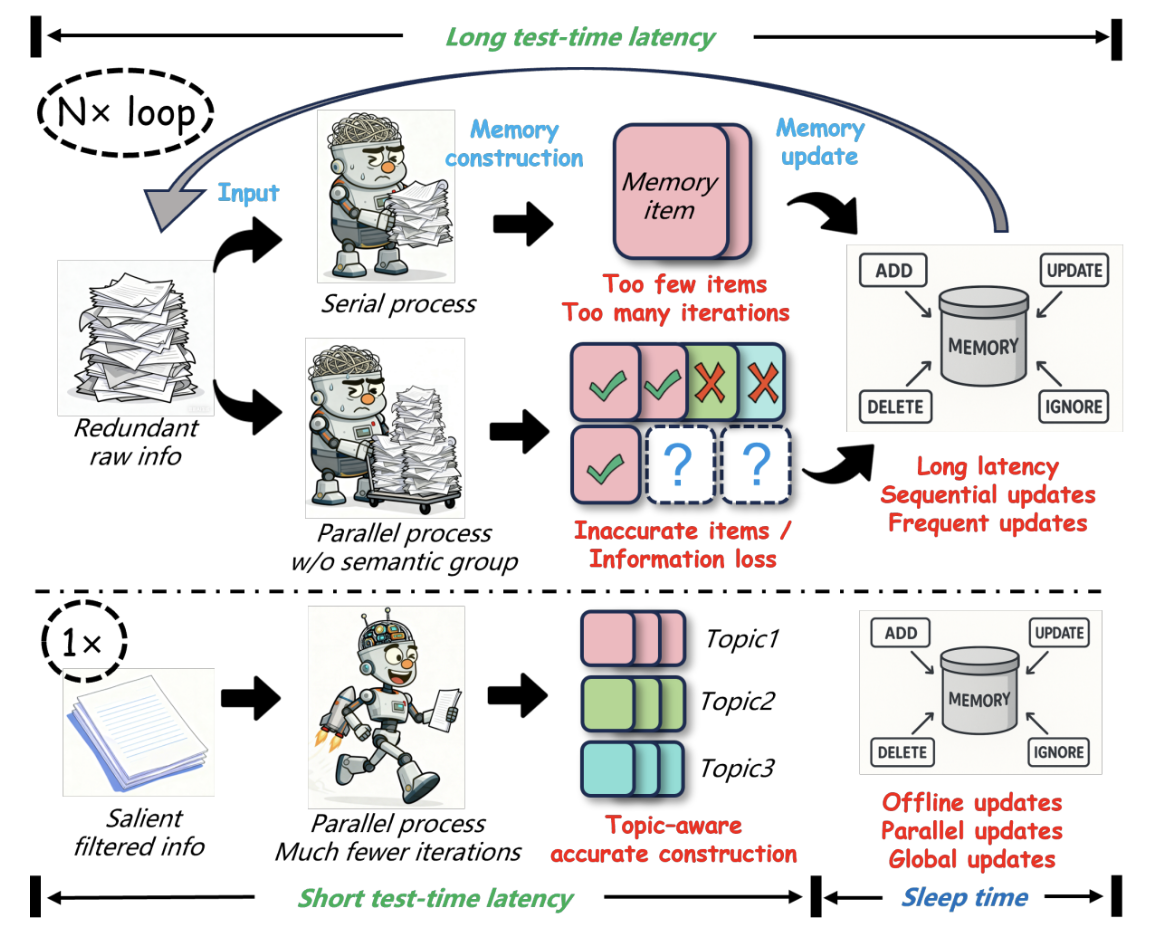

Sleep Emulation: Explicit Memory Consolidation

LightMem (Fang et al., 2025) builds an analog of the Atkinson–Shiffrin multi-store model of memory from the 1960s: sensory register → short-term store → long-term store.

The interesting part for us is the explicit "sleep" phase: memory consolidation is separated from memory use and happens in the background, between agent calls. The main motivation is to speed up inference, since the agent does not have to consolidate memory in real time.

You can think of this as the difference between studying and exam-taking. If you try to organize your notes during the exam, you will run out of time. But if you spent the night before sorting, summarizing, and connecting your notes (that is, "sleeping on it"), you walk into the exam with a clean, well-organized memory and can retrieve what you need much faster.
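The separation between a cheap online path and an offline "sleep" phase can be sketched as follows. This is a deliberately simplified toy, not LightMem's design: `SleepyMemory` is a hypothetical class, topics are given by the caller, and consolidation is just grouping and deduplication where a real system would summarize with an LLM.

```python
from dataclasses import dataclass, field


@dataclass
class SleepyMemory:
    short_term: list[tuple[str, str]] = field(default_factory=list)   # raw (topic, text) pairs
    long_term: dict[str, list[str]] = field(default_factory=dict)     # topic -> consolidated texts

    def write(self, topic: str, text: str) -> None:
        # Online path: append only, no reorganization, so the agent is never blocked.
        self.short_term.append((topic, text))

    def sleep(self) -> int:
        """Offline path: group by topic and drop duplicates; return how many items were kept."""
        kept = 0
        for topic, text in self.short_term:
            bucket = self.long_term.setdefault(topic, [])
            if text not in bucket:
                bucket.append(text)
                kept += 1
        self.short_term.clear()
        return kept


mem = SleepyMemory()
mem.write("deploy", "staging uses blue-green deploys")
mem.write("deploy", "staging uses blue-green deploys")
mem.write("oncall", "Alice is on call this week")
print(mem.sleep(), mem.long_term)
```

The point of the split is that `write` stays constant-time during a conversation, while all the expensive work is deferred to `sleep`, which can run between agent calls.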

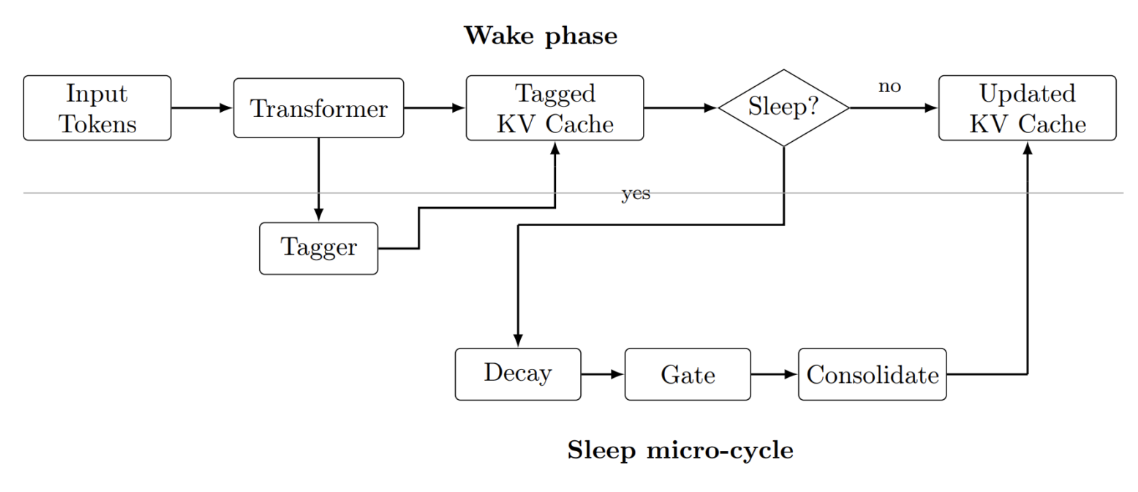

SleepGate (Xie, March 2026) fights proactive interference, the effect where accumulated stale information degrades retrieval quality. It is similar to LightMem in spirit, but more interesting mathematically: it updates the KV-cache directly.

New Memory Primitives

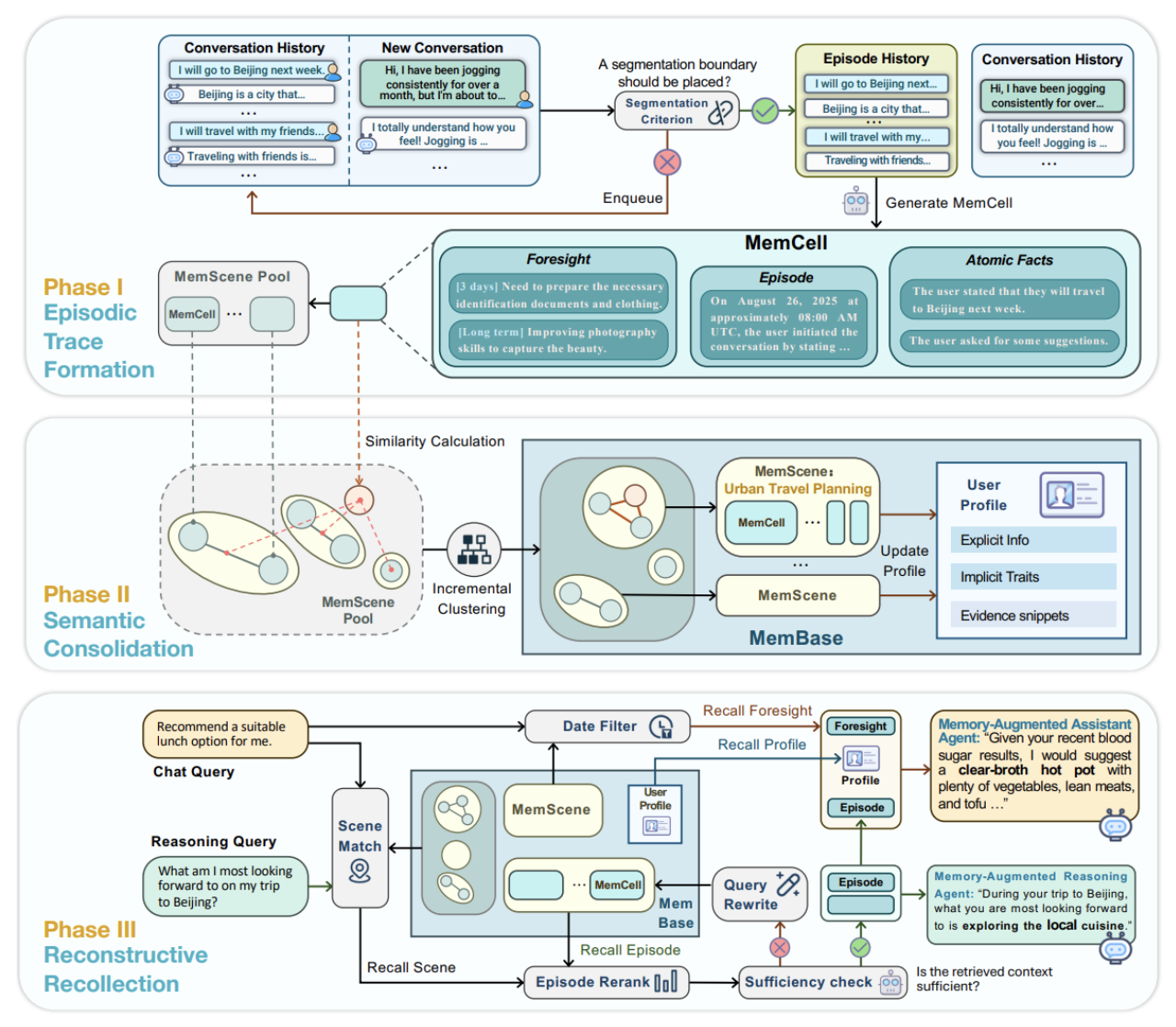

EverMemOS (Hu et al., January 2026) introduces a MemCell primitive — a standardized container into which facts, dialogue fragments, and anything else worth remembering get converted — and organizes MemCells into *MemScenes* for retrieval.

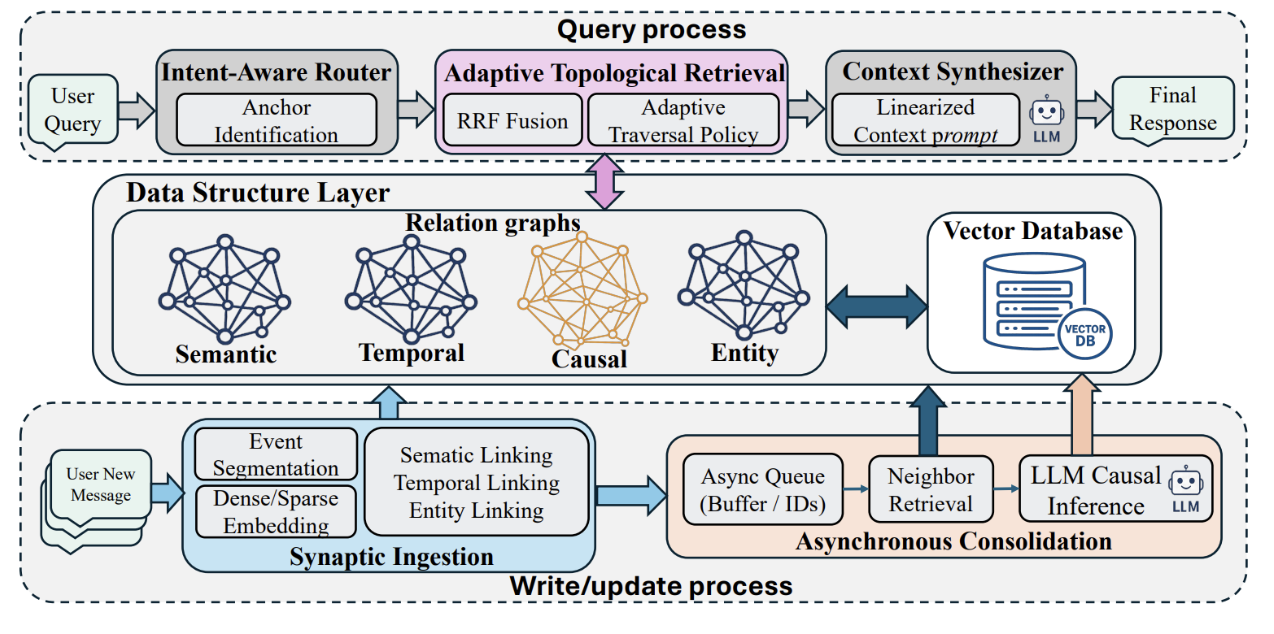

MAGMA (Jiang et al., January 2026) builds multi-relational subgraphs (entity, episodic, temporal, semantic) and adds a Temporal Inference Engine that normalizes temporal expressions into a chronological representation.

New Principles for Memory Management

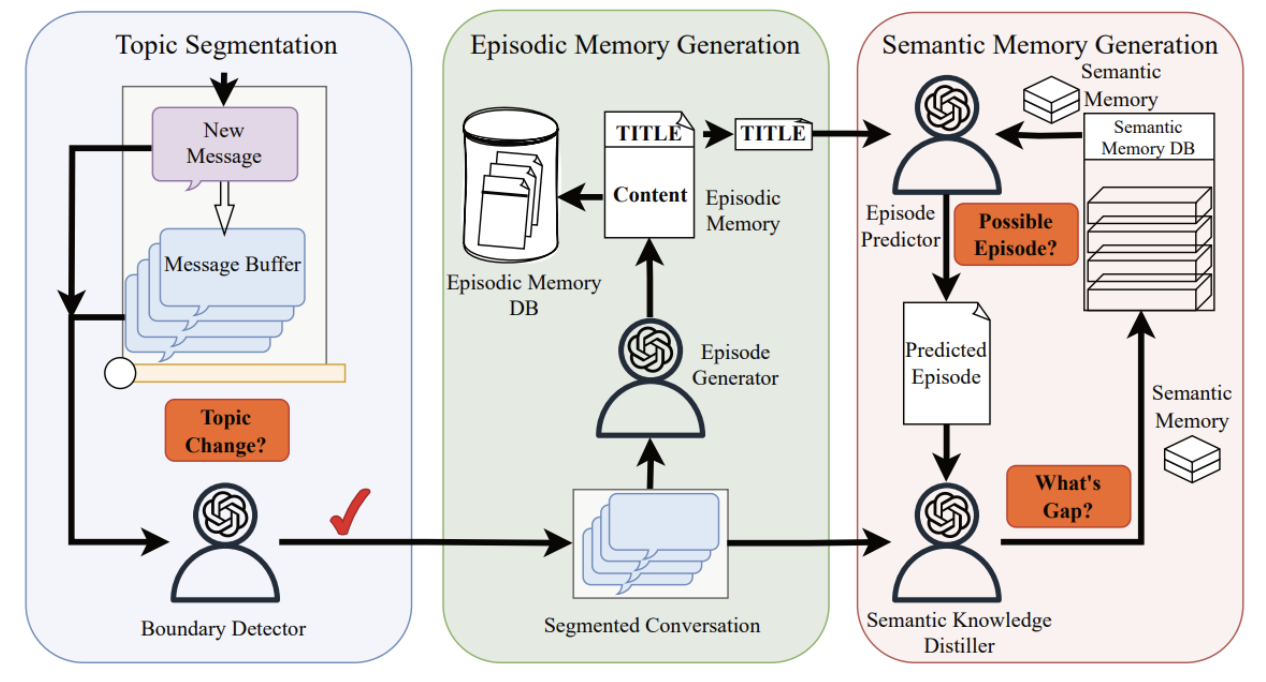

Nemori (Nan et al., 2025) applies the “free energy principle” from neuroscience: the agent predicts the content of an episode from its existing knowledge base, and the gap between prediction and reality determines what needs to be added to memory. In other words, new information enters memory only if it is a "surprise" relative to what is already known.

If you have ever noticed that you remember surprising or unexpected events much more vividly than routine ones—your first day at a new job, an unexpected compliment, an accident you witnessed—you have experienced this principle firsthand. Nemori formalizes this: memory is not a tape recorder that captures everything; it is a surprise detector that only records what does not match its predictions.
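The "surprise detector" idea reduces to a set difference once prediction is abstracted away. In this sketch, facts are plain strings compared by exact match and `predict` is a hypothetical callable standing in for the model that guesses an episode's content from existing knowledge; Nemori's actual comparison is done by an LLM and is far less literal.

```python
from typing import Callable


def surprise_gated_update(
    knowledge: set[str],
    episode_facts: set[str],
    predict: Callable[[set[str]], set[str]],
) -> set[str]:
    """Store only the facts the agent failed to predict from what it already knows."""
    predicted = predict(knowledge)
    surprises = episode_facts - predicted
    knowledge |= surprises          # mutates the caller's knowledge base in place
    return surprises


knowledge = {"user likes coffee"}
episode = {
    "user likes coffee",
    "user drinks coffee in the morning",
    "user switched to decaf",
}
# Toy predictor: everything already known, plus one obvious inference.
new_facts = surprise_gated_update(
    knowledge,
    episode,
    predict=lambda known: set(known) | {"user drinks coffee in the morning"},
)
print(new_facts)
```

Only the decaf fact is written, because it is the only one the predictor did not see coming; the better the predictor gets, the less the memory grows.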

Benchmarks: How We Measure Memory

A few words about benchmarks, because when choosing a system, you need to understand what "system A gets 91% on X" actually means.

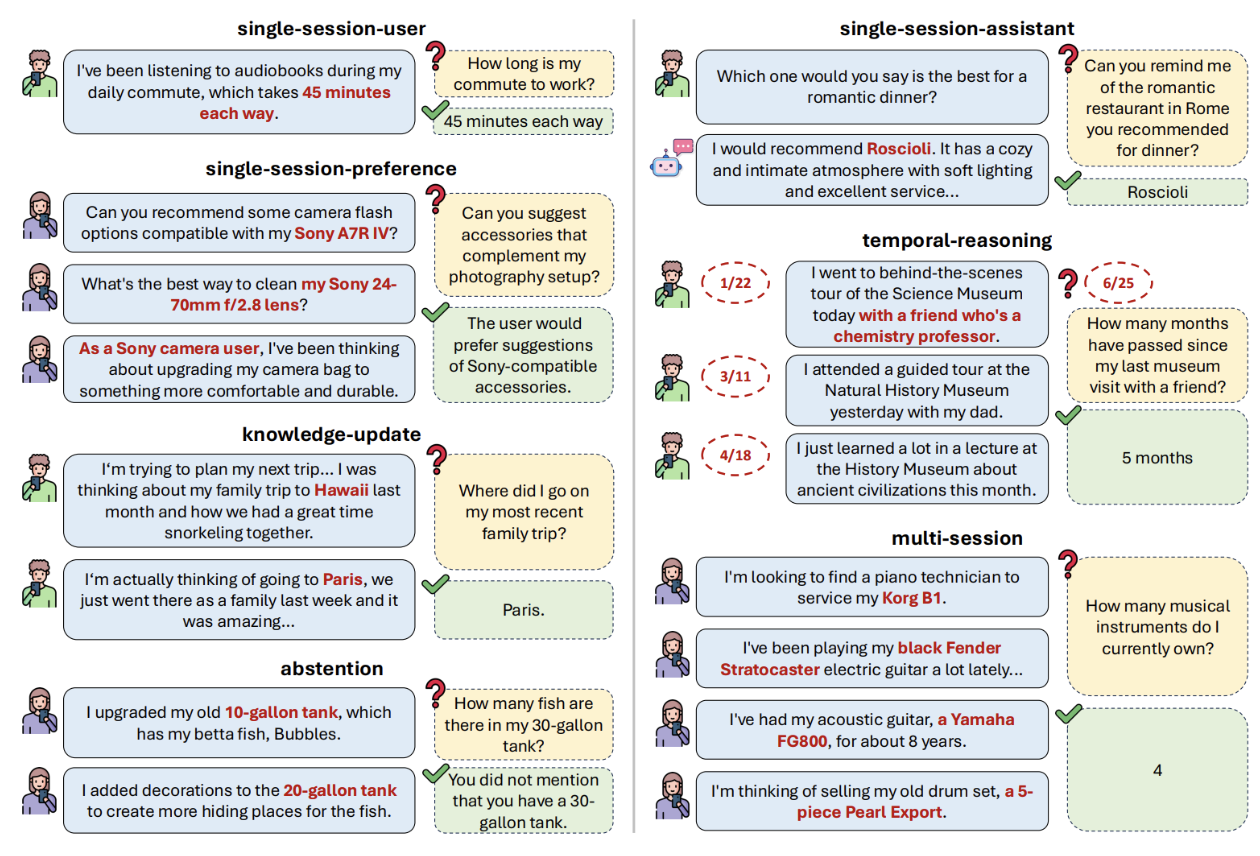

LoCoMo (Maharana et al., 2024) is probably the main dataset for memory evaluation: very long dialogues (about 300 turns on average, spread over up to 35 sessions), five question types (single-hop, multi-hop, temporal, commonsense, adversarial). LoCoMo became the de facto standard, but an important caveat: on LoCoMo, long-context models can partially solve the task without any external memory, just by stuffing everything into the context. So LoCoMo scores depend heavily on the base LLM.

LongMemEval (Wu et al., 2024) is harder: 500 questions testing five capabilities (information extraction, multi-session reasoning, temporal reasoning, knowledge updates, abstention). Even GPT-4o in full-context mode (~115K tokens) only manages 30–70%.

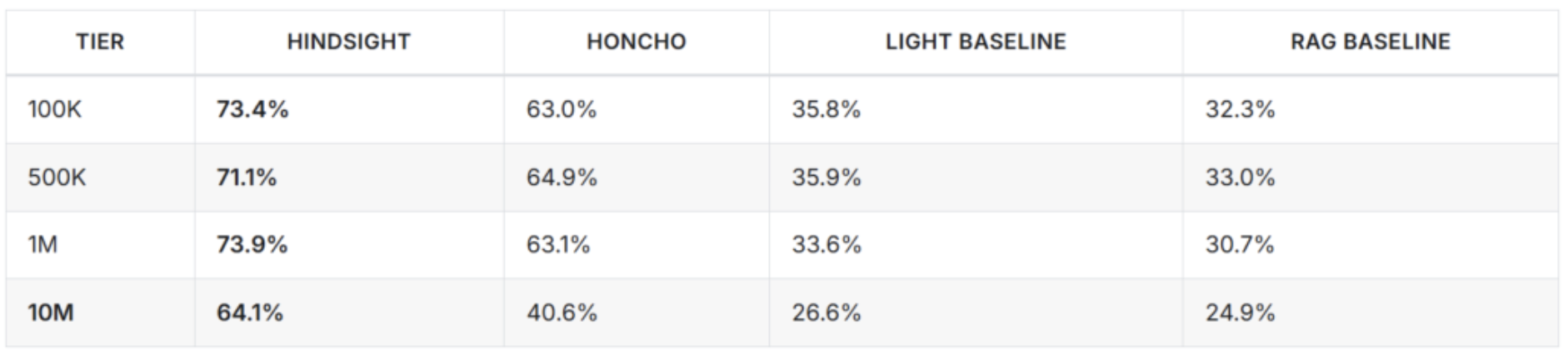

BEAM (Beyond a Million Tokens; Tavakoli et al., 2025) contains 2,000 validated questions over 10 million tokens of context, testing ten different capabilities including contradiction resolution and event ordering. At this scale, no base model works on its own, and you genuinely need a proper memory architecture. On BEAM, Hindsight currently leads with 64.1%, with the next entry at 40.6%. That is a very large gap.

MemoryArena (He et al., 2026) measures memory inside real agent tasks: web navigation, planning, sequential reasoning. As I mentioned, systems that score 95% on LoCoMo in pure recall drop to 40–60% when they need to actually use the recalled facts. I think MemoryArena will become the key benchmark for 2026, because it asks the right practical question.

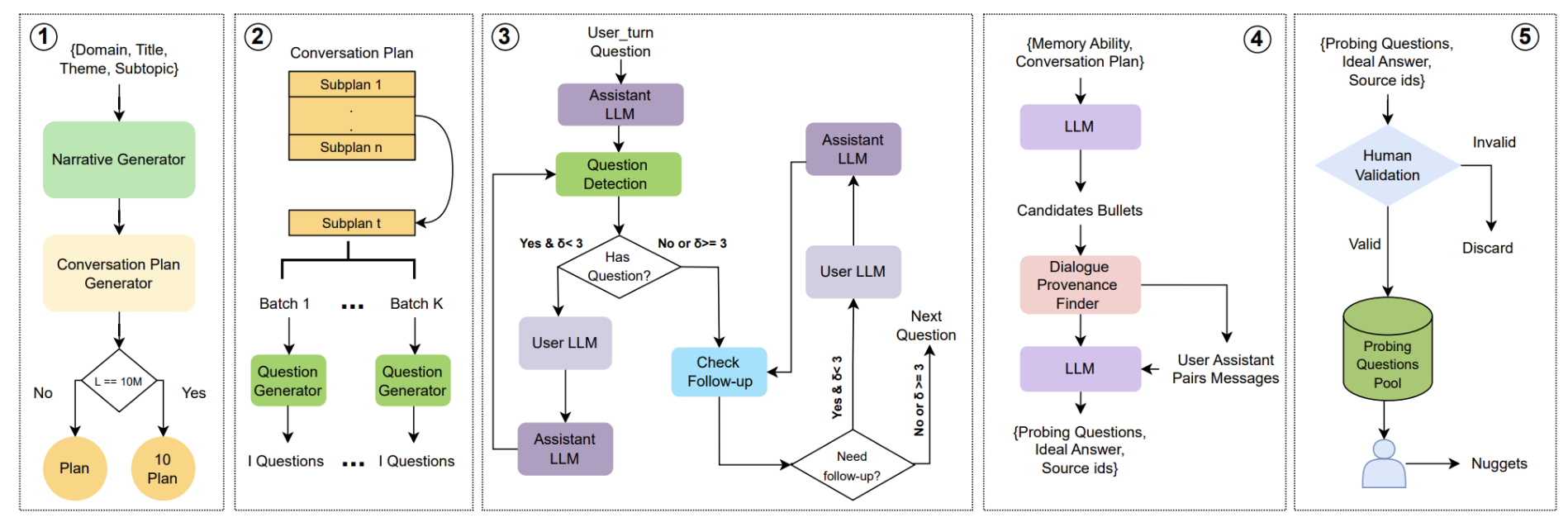

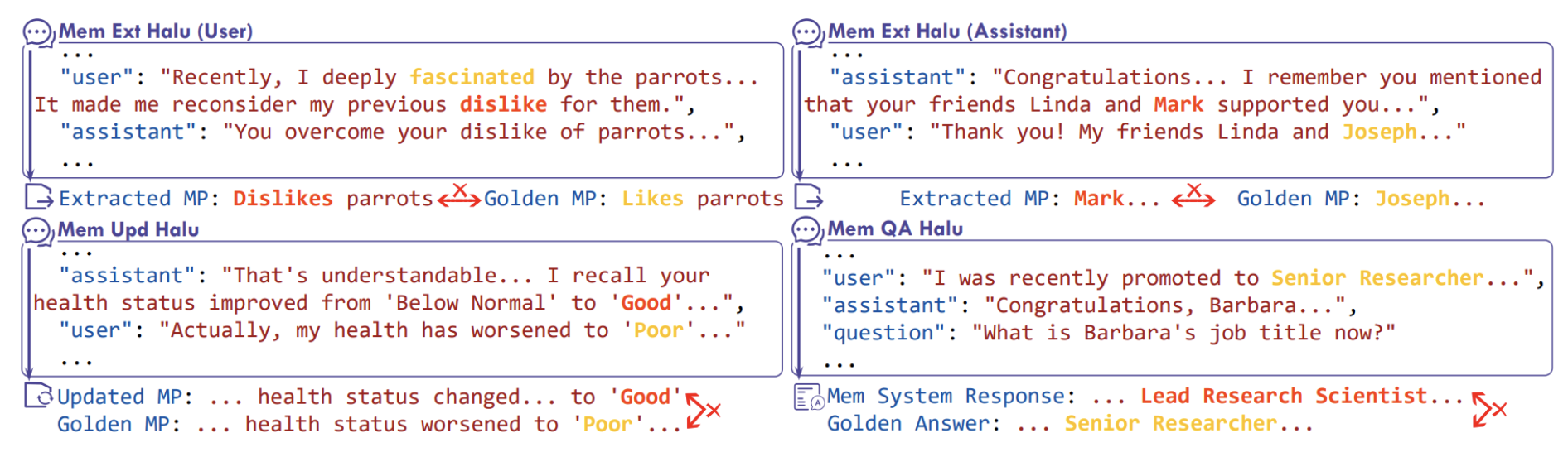

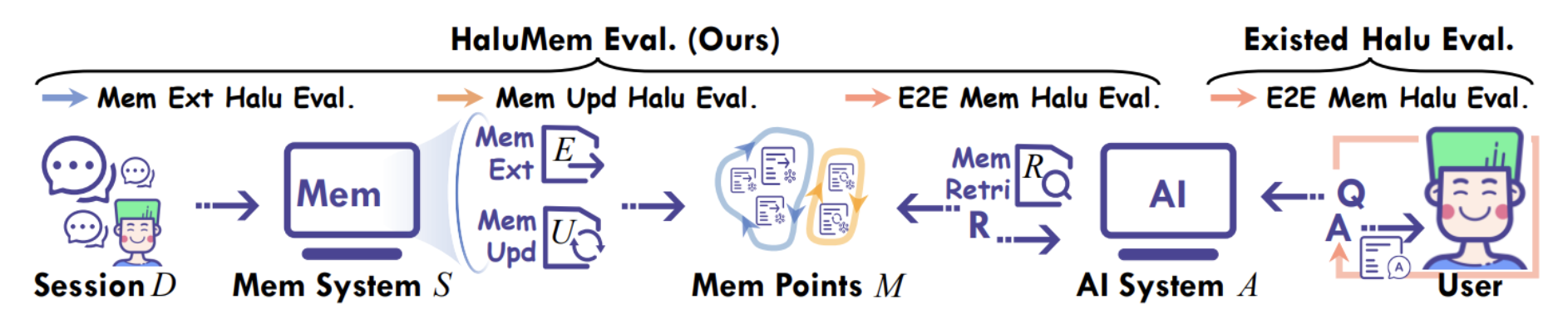

HaluMem (Chen et al., 2025) is a separate benchmark measuring hallucinations in memory operations, that is, when the LLM makes something up while extracting facts or generating what goes into memory.

This is especially important for systems that use LLMs to create memory entries, because if the LLM invents a fact during recording, that invented fact lives in the database forever.

MemPalace: So What Did Milla Jovovich Actually Build?

Now we arrive at the main news hook. MemPalace was made by Milla Jovovich together with Ben Sigman, her main technical co-author. The repository appeared on April 5, 2026, picked up about a thousand GitHub stars in a couple of days, and is being actively discussed in the industry.

MemPalace is based on the method of loci, a mnemonic technique that goes back to ancient Greece and was used by orators: to memorize a long speech, you "place" its fragments in the rooms of an imaginary building, then mentally walk through the rooms and "pick up" each part. This is where the term "memory palace" comes from. Here is an illustration from Nicholas Rhodes’ blogpost about MemPalace:

If you have watched Sherlock (the BBC series), you have seen this technique dramatized: Sherlock retreats into his "mind palace" to retrieve information by mentally walking through rooms and hallways. MemPalace takes this metaphor literally and organizes agent memory as a spatial hierarchy:

- wings — top-level divisions, each dedicated to a person or project;

- rooms — rooms within a wing, dedicated to specific topics;

- halls — "corridors" shared across wings, with five fixed categories: facts, events, discoveries, preferences, advice;

- tunnels — cross-links when the same room exists in different wings;

- closets — abbreviated summaries pointing to originals;

- drawers — the original files themselves, stored in full and never summarized.

Organizationally, this is beautiful and very human. You can actually picture this structure, unlike a vector store with a million entries.
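To make the hierarchy concrete, here is a minimal sketch of how these levels could nest as plain data classes. It is my own illustration, not MemPalace's actual code: the class and field names, the choice to group closets by hall inside each room, and the `tunnels` helper are all assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# The five fixed hall categories named in the text.
HALLS = ("facts", "events", "discoveries", "preferences", "advice")


@dataclass
class Drawer:
    """An original file, stored in full and never summarized."""
    path: str
    text: str


@dataclass
class Closet:
    """An abbreviated summary that points back to its original drawer."""
    summary: str
    drawer: Drawer


@dataclass
class Room:
    """A topic within a wing; closets are grouped by hall (an assumption)."""
    topic: str
    closets: dict[str, list[Closet]] = field(
        default_factory=lambda: {hall: [] for hall in HALLS}
    )


@dataclass
class Wing:
    """A top-level division dedicated to one person or project."""
    name: str
    rooms: dict[str, Room] = field(default_factory=dict)

    def room(self, topic: str) -> Room:
        """Return the room for a topic, creating it on first use."""
        return self.rooms.setdefault(topic, Room(topic))


def tunnels(wings: list[Wing]) -> dict[str, list[str]]:
    """Map each topic that exists in two or more wings to those wing names."""
    seen: dict[str, list[str]] = {}
    for wing in wings:
        for topic in wing.rooms:
            seen.setdefault(topic, []).append(wing.name)
    return {topic: names for topic, names in seen.items() if len(names) > 1}
```

In this sketch a tunnel is not stored anywhere; it is derived whenever the same room topic shows up in different wings, which matches the description of tunnels as cross-links.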

The second key feature is a zero-LLM write path: no LLM calls happen during memory storage; only deterministic rules are used. LLMs are invoked only at read time, for reranking and reflection.
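A deterministic write path can be as simple as a list of regular expressions that decide which hall a new note belongs to. The sketch below only illustrates that idea; the patterns, the rule order, and the `route` function are hypothetical and not taken from the MemPalace repository.

```python
import re

# Hypothetical rules: the first matching pattern decides the hall.
# Anything that matches no rule falls through to "facts".
RULES = [
    ("preferences", re.compile(r"\b(prefer|like|dislike|favou?rite)\w*\b", re.I)),
    ("advice", re.compile(r"\b(should|recommend|avoid|tip)\w*\b", re.I)),
    ("events", re.compile(r"\b(yesterday|today|tomorrow|on \d{4}-\d{2}-\d{2})\b", re.I)),
    ("discoveries", re.compile(r"\b(found|discovered|realized|turns out)\b", re.I)),
]


def route(text: str) -> str:
    """Pick a hall for a note using only regex rules, with no LLM call."""
    for hall, pattern in RULES:
        if pattern.search(text):
            return hall
    return "facts"
```

The appeal of this design is that writes are cheap and reproducible: the same note always lands in the same place. The price is that a handful of keyword rules will misfile anything phrased in an unexpected way.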

The third interesting feature is AAAK compression, a special dialect that uses regular expressions to pack repeated entities into short codes and structural markers. The idea is that any modern LLM can read this compressed format without a decoder, and the compression is genuinely impressive: the result is tens of times smaller than plain text.
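To give a feel for what "packing repeated entities into short codes" can mean, here is a toy version of the idea. It is emphatically not the AAAK format: the entity pattern (runs of capitalized words), the `E1`, `E2` code scheme, and the `pack_entities` name are my assumptions for illustration.

```python
from __future__ import annotations

import re
from collections import Counter

# Toy notion of an "entity": two or more capitalized words in a row.
ENTITY = re.compile(r"\b[A-Z][a-z]+(?: [A-Z][a-z]+)+\b")


def pack_entities(text: str, min_count: int = 2) -> tuple[str, dict[str, str]]:
    """Replace entities that occur at least min_count times with short codes.

    Returns the packed text and the legend mapping each entity to its code.
    """
    counts = Counter(ENTITY.findall(text))
    repeated = [name for name, n in counts.most_common() if n >= min_count]
    legend = {name: f"E{i}" for i, name in enumerate(repeated, start=1)}
    packed = ENTITY.sub(lambda m: legend.get(m.group(0), m.group(0)), text)
    return packed, legend
```

A reader (human or LLM) needs the legend alongside the packed text to expand the codes again, so the savings only show up when entities repeat many times.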

But then…

Upon careful analysis, it turned out that MemPalace does not really work as advertised. It claims 96.6% Recall@5 on LongMemEval in "raw verbatim, zero API calls" mode. That would be higher than all competitors. But in reality, that 96.6% comes from a mode where documents are stored verbatim in ChromaDB, retrieval is ChromaDB's default embedding search, and *none of the wings, rooms, halls, tunnels, or closets participate in retrieval at all*.

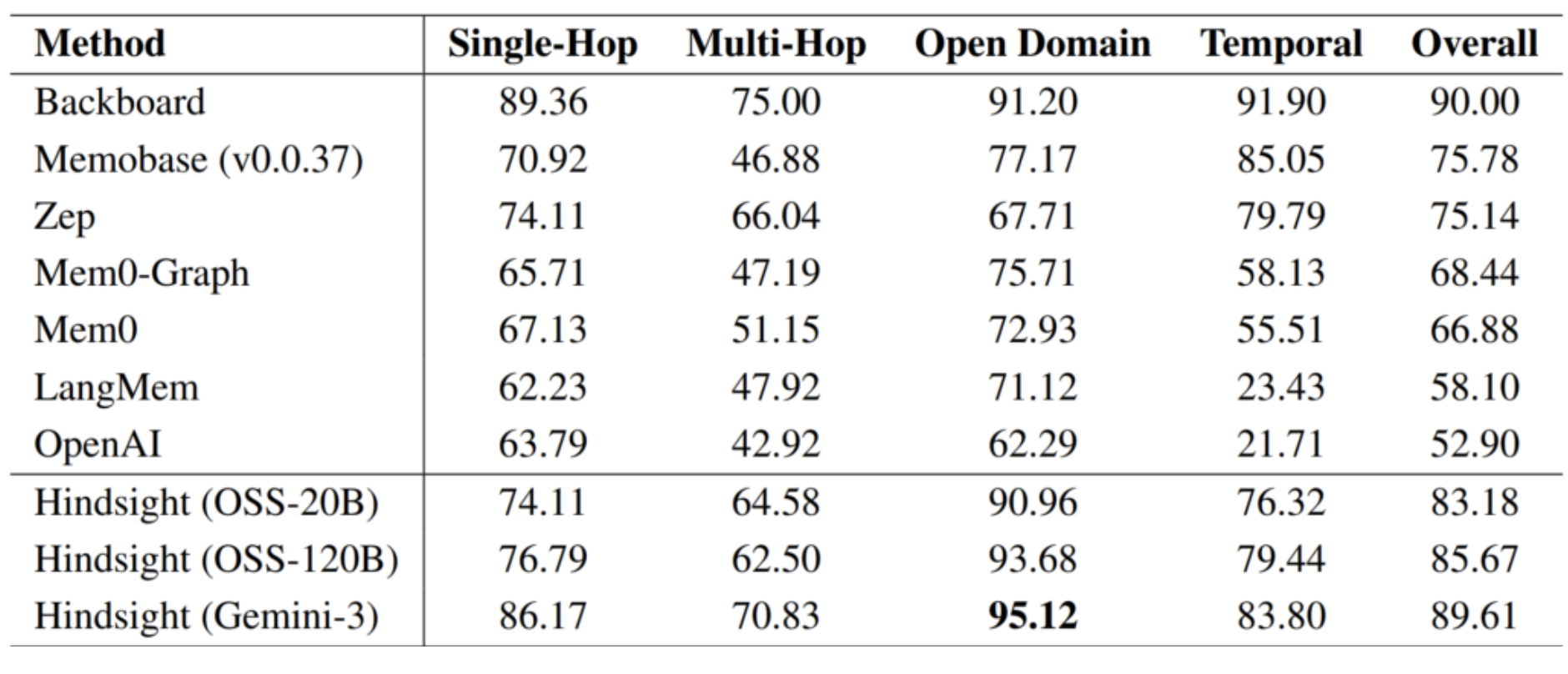

In other words, 96.6% is essentially the score of ChromaDB's default embeddings on LongMemEval, having nothing to do with the palace architecture. Moreover, when you actually turn on the memory palace mechanisms, the numbers go down. And on LoCoMo, where simple embeddings work worse, MemPalace gets 60.3% R@10, well below Hindsight (89.61%) and even MAGMA (70%).
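Since R@5 and R@10 carry the whole argument here, it helps to see how little the metric actually checks. Below is one common definition of Recall@k; the exact variant used by each benchmark harness may differ, and the function names are mine.

```python
def recall_at_k(retrieved, relevant, k):
    """Fraction of the relevant ids that appear among the top-k retrieved ids."""
    relevant = set(relevant)
    if not relevant:
        raise ValueError("relevant must contain at least one id")
    top_k = set(retrieved[:k])
    return len(top_k & relevant) / len(relevant)


def mean_recall_at_k(runs, k):
    """Average Recall@k over (retrieved, relevant) pairs, one pair per query."""
    scores = [recall_at_k(retrieved, relevant, k) for retrieved, relevant in runs]
    return sum(scores) / len(scores)
```

Note that nothing in this computation looks at how the store is organized or at the final answer. It only asks whether the right documents came back, which is why a plain embedding search can post a high number on it.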

To their credit, on April 7, 2026 the authors released a README update, signed "Milla Jovovich & Ben Sigman", acknowledging several errors and pledging to fix them.

The concept of spatially organized memory is genuinely interesting — not just as a metaphor but also, for example, as a way to improve interpretability. But for now, it is clearly not production-ready.

Hindsight: A Modern Example of Good Agent Memory

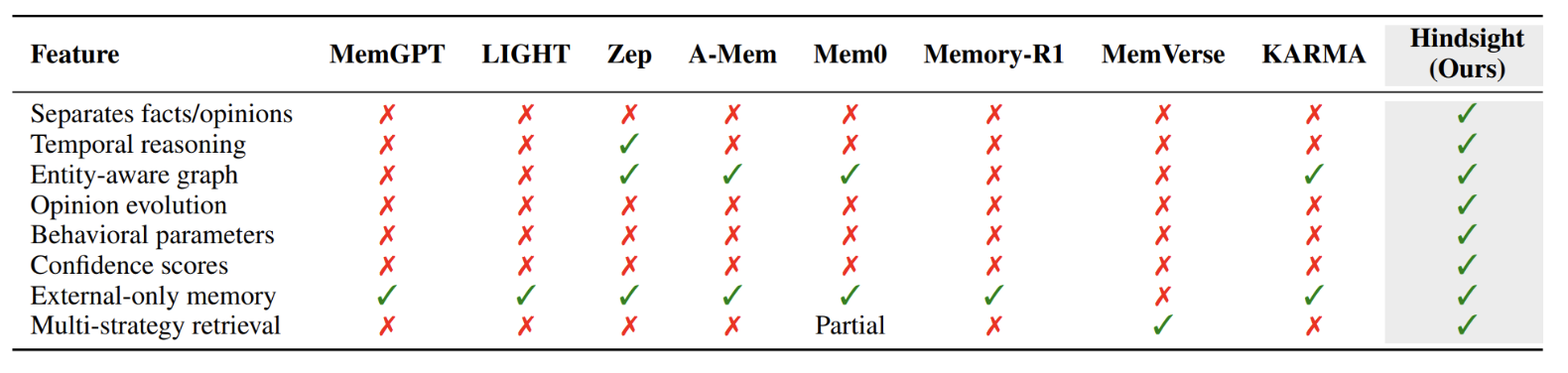

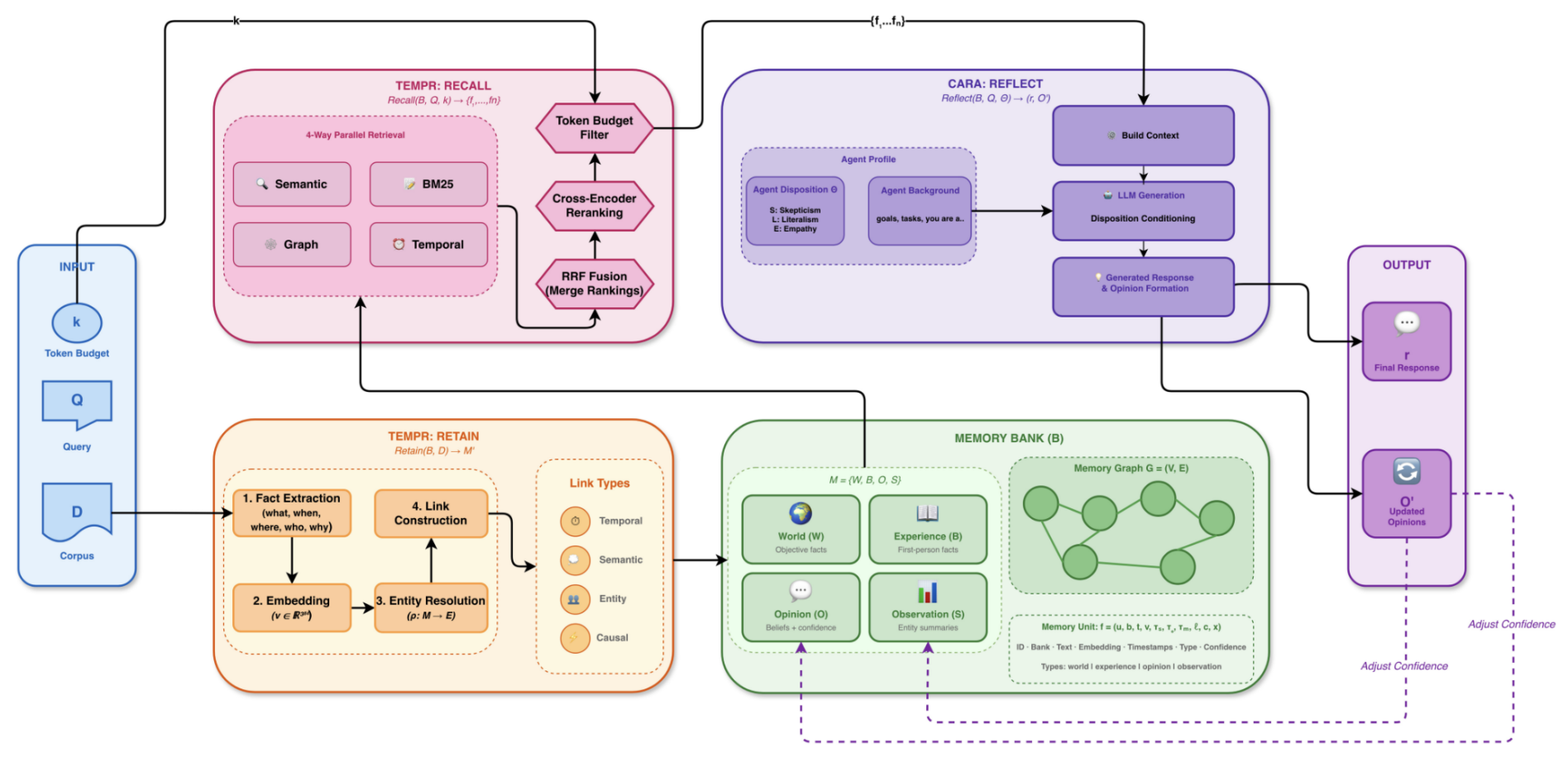

The system Hindsight (GitHub) is described in "Hindsight is 20/20: Building Agent Memory that Retains, Recalls, and Reflects" (Latimer et al., 2025). In my opinion, it is both theoretically interesting and practically strong, and I chose it for a detailed walkthrough because it illustrates many different components that appear individually in other systems.

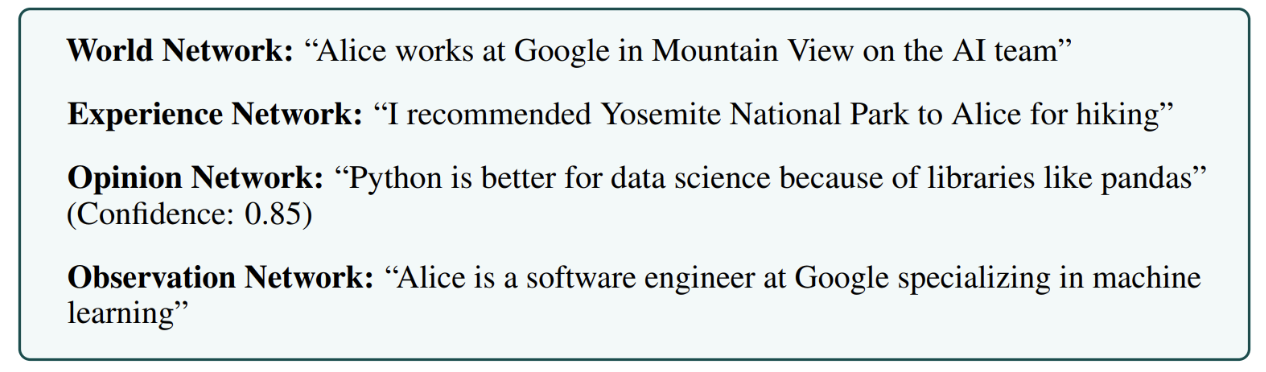

Four Memory Networks

Hindsight splits agent memory into four epistemically distinct networks:

- world network W — objective facts about the world: "Paris is the capital of France";

- experience network B — the agent's own first-person experience: "I asked Alice about X, she answered Y" or "I tried approach Z and it didn't work";

- observation network S — synthesized entity profiles built from W and B; these are meant to be relatively neutral and are automatically updated when new information arrives;

- opinion network O — subjective beliefs with a confidence score c ∈ [0, 1] and a timestamp: "I think Y is a reasonable idea (confidence 0.7, updated March)."

Think of it as the difference between a journalist's notebook (world facts), their personal diary (experiences), their source profiles (observations synthesized from both), and their editorial opinions. A good journalist keeps these distinct; so does Hindsight. When the agent reflects, it can revise its opinions without touching the underlying facts. And on a query, it can search "what do I know about X" separately from "what do I think about X".
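In code, the separation amounts to tagging every memory unit with its network and letting only opinions carry a confidence score. The following is a minimal sketch under my own naming; it is not Hindsight's schema, and the `select` helper is hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from enum import Enum
from typing import Optional


class Network(Enum):
    WORLD = "W"        # objective facts
    EXPERIENCE = "B"   # the agent's own first-person experience
    OBSERVATION = "S"  # synthesized entity profiles
    OPINION = "O"      # subjective beliefs with a confidence score


@dataclass(frozen=True)
class MemoryUnit:
    network: Network
    text: str
    confidence: Optional[float] = None      # only meaningful for opinions
    updated_at: Optional[datetime] = None

    def __post_init__(self):
        if self.network is Network.OPINION:
            if self.confidence is None or not 0.0 <= self.confidence <= 1.0:
                raise ValueError("an opinion needs a confidence in [0, 1]")
        elif self.confidence is not None:
            raise ValueError("only opinions carry a confidence score")


def select(units, *networks):
    """Keep only the units that belong to one of the given networks."""
    wanted = set(networks)
    return [unit for unit in units if unit.network in wanted]
```

With this shape, "what do I think about X" is `select(units, Network.OPINION)` followed by a search, and revising an opinion never has to touch a world fact.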

This epistemological separation turns out to be important for the resulting performance. Other systems lump everything together, but Hindsight's architecture insists on asking what kind of memory unit you are dealing with, and this makes retrieval and reflection more reliable. Here is a high-level overview:

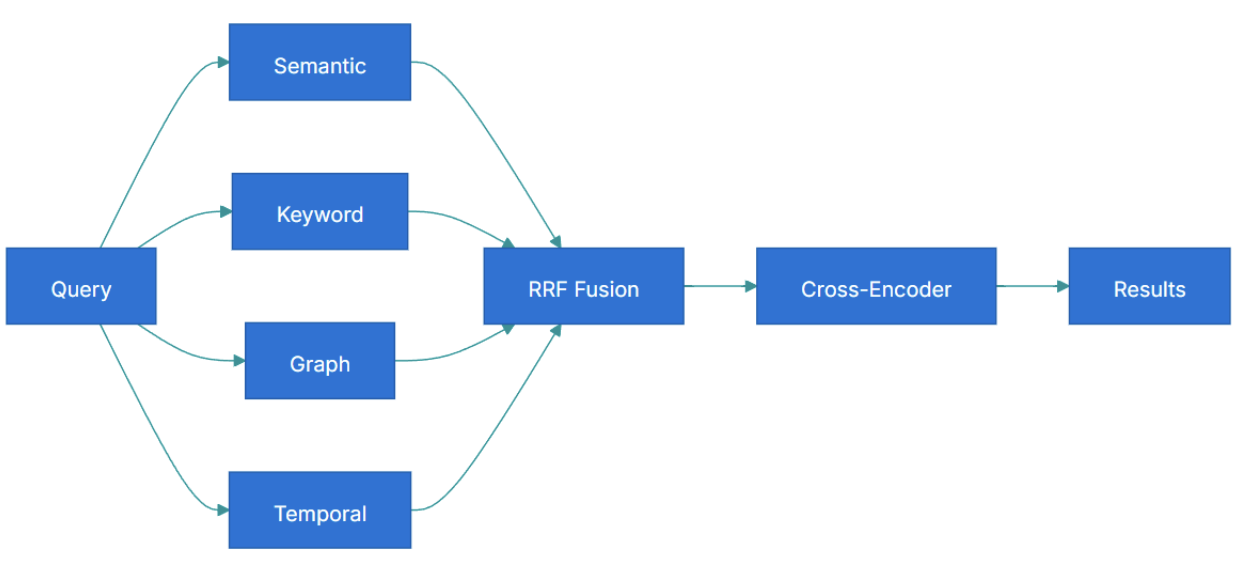

TEMPR: Parallel Multi-Strategy Retrieval with RRF

Recall in Hindsight is implemented through a component called TEMPR (Temporal Entity Memory Priming Retrieval). On each query, four retrieval strategies run in parallel:

- Semantic — cosine similarity via an HNSW index on pgvector.

- Keyword — standard full-text BM25.

- Graph — activation spreading over a graph where nodes are entities and edges are entity/temporal/semantic/causal links.

- Temporal Graph — rule-based and seq2seq date parsing, normalization of temporal expressions, and comparison of memory occurrence intervals.
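Of the four strategies, activation spreading is probably the least familiar, so here is a bare-bones version. The graph format (a dict of weighted neighbor lists), the `decay` and `steps` parameters, and their default values are assumptions of this sketch, not Hindsight's actual settings.

```python
from collections import defaultdict


def spread_activation(graph, seeds, decay=0.5, steps=2):
    """Propagate activation from seed nodes along weighted edges.

    graph: dict mapping a node to a list of (neighbor, weight) pairs
    seeds: dict mapping a node to its initial activation
    Returns (node, activation) pairs sorted from most to least activated.
    """
    activation = defaultdict(float, seeds)
    frontier = dict(seeds)
    for _ in range(steps):
        next_frontier = defaultdict(float)
        for node, energy in frontier.items():
            for neighbor, weight in graph.get(node, []):
                # Each hop passes on a decayed share of the node's energy.
                next_frontier[neighbor] += energy * weight * decay
        for node, energy in next_frontier.items():
            activation[node] += energy
        frontier = next_frontier
    return sorted(activation.items(), key=lambda kv: kv[1], reverse=True)
```

The entities mentioned in the query act as seeds, and memory units attached to strongly activated nodes become retrieval candidates even if they share no words with the query.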

Different questions need different strategies: "When did I last discuss X?" needs the temporal graph; "What do I know about Google?" needs the entity graph; "Which of my notes are similar in meaning to this idea?" needs semantic search; "When did we mention Kubernetes before?" is pure BM25.

Why not just use the best one? Because no single strategy is best for all queries, and you often do not know in advance which query type you are dealing with. Running all four in parallel and merging the results is more robust than betting on any one.
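Running the strategies side by side is mostly plumbing. A minimal sketch with the standard library, assuming each strategy is just a callable that takes the query and returns a ranked list of memory ids (the `run_strategies` name and this interface are mine):

```python
from concurrent.futures import ThreadPoolExecutor


def run_strategies(query, strategies):
    """Run every retrieval strategy on the same query concurrently.

    strategies: dict mapping a strategy name to a callable(query) -> ranked ids
    Returns a dict mapping each strategy name to its ranked list.
    """
    if not strategies:
        return {}
    with ThreadPoolExecutor(max_workers=len(strategies)) as pool:
        futures = {name: pool.submit(fn, query) for name, fn in strategies.items()}
        return {name: future.result() for name, future in futures.items()}
```

Threads are a reasonable fit because the real strategies would mostly wait on a database; the total latency is then roughly that of the slowest strategy rather than the sum of all four.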

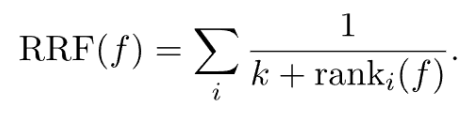

The four result lists are merged via Reciprocal Rank Fusion (RRF):

$$\mathrm{RRF}(f) = \sum_{i=1}^{4} \frac{1}{k + \mathrm{rank}_i(f)}$$

Here f is a specific memory unit, rank_i(f) is its rank in the list from strategy i, and k is a smoothing constant (typically around 60). The idea is simple: each strategy contributes 1/(k + rank) to a memory unit's final score, so the contribution shrinks as the rank gets worse. A document ranked #1 in two strategies and #50 in the other two will still score highly, because the two high rankings dominate. The smoothing constant k keeps the gap between #1 and #2 from being too dramatic, and the gap between adjacent ranks keeps shrinking further down the list.
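RRF is short enough to write out in full: every list adds 1/(k + rank) to the score of each item it contains, with ranks starting at 1. This is the standard formulation rather than Hindsight's own code; treating an item that is missing from a list as contributing nothing is my assumption.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists by summing 1 / (k + rank) for each item.

    rankings: iterable of ranked lists of ids, best first
    Returns (item, score) pairs sorted from highest to lowest score.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, item in enumerate(ranking, start=1):
            scores[item] += 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
```

Because only ranks enter the formula, the four strategies never need comparable scores: a BM25 score and a cosine similarity can be fused without any normalization.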

After RRF, results pass through a heavier cross-encoder reranker for final ordering:

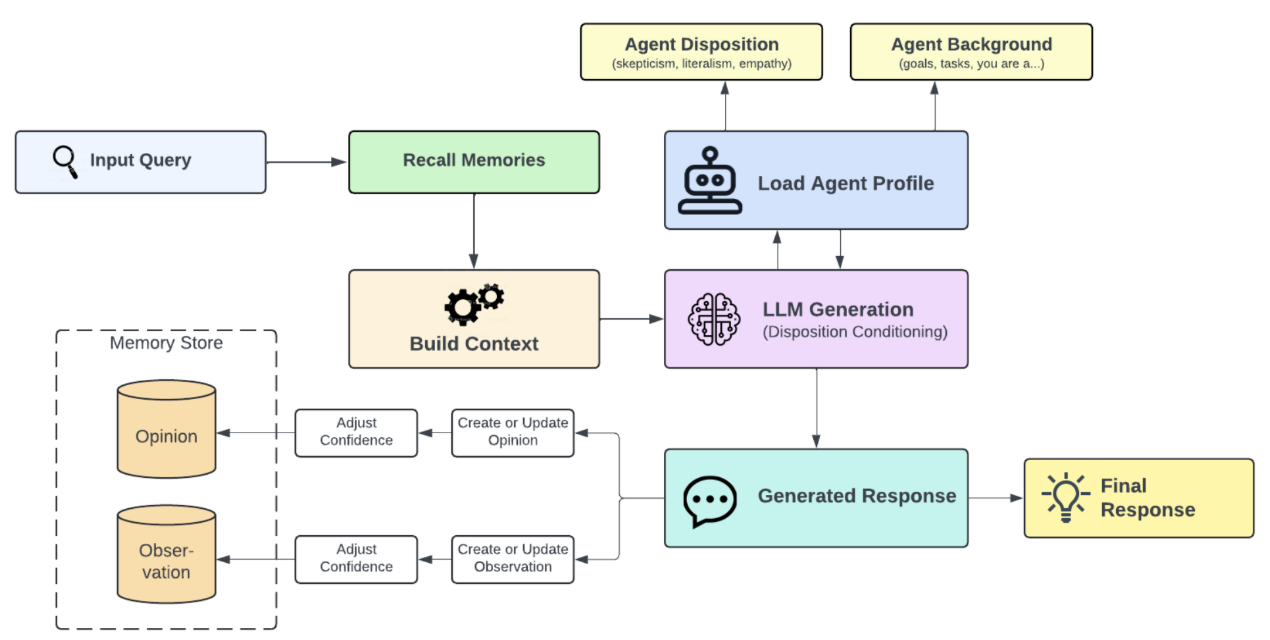

CARA: Reflect in Priority Order

Reflection in Hindsight is implemented through a component called CARA (Coherent Adaptive Reasoning Agents). When the agent wants to "think" about something, CARA:

- calls TEMPR to retrieve relevant memory units from all four networks;

- loads the agent's behavioral profile and background;

- generates a response, processing memory *in priority order*: opinions and observations (synthesized data) first, then raw world/experience facts. In other words, the system first checks whether it has already thought about this and reached conclusions, and goes back to raw facts only when necessary;

- forms new opinions with confidence scores;

- updates the opinion network.

This creates a real learning loop; the agent changes over time as it works, much like how a human analyst might start a project with no opinions, gradually form tentative views based on evidence, and eventually hold well-supported positions.
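The "priority order" step is the easiest one to picture in code. Here is a small sketch, assuming memory units are dicts with a `network` key; the two-tier priority table and the function name are my own reading of the description, not CARA's implementation.

```python
# Synthesized units come first, raw facts second (two tiers, an assumption).
PRIORITY = {"opinion": 0, "observation": 0, "world": 1, "experience": 1}


def order_for_reflection(units):
    """Put opinions and observations ahead of raw world/experience facts.

    sorted() is stable, so units keep their retrieval order within each tier.
    """
    return sorted(units, key=lambda unit: PRIORITY[unit["network"]])
```

Keeping the sort stable matters: the retrieval ranking is preserved inside each tier, so the priority rule reorders tiers without throwing away the reranker's work.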

And here is the final overall Hindsight architecture, with all the components we have mentioned above:

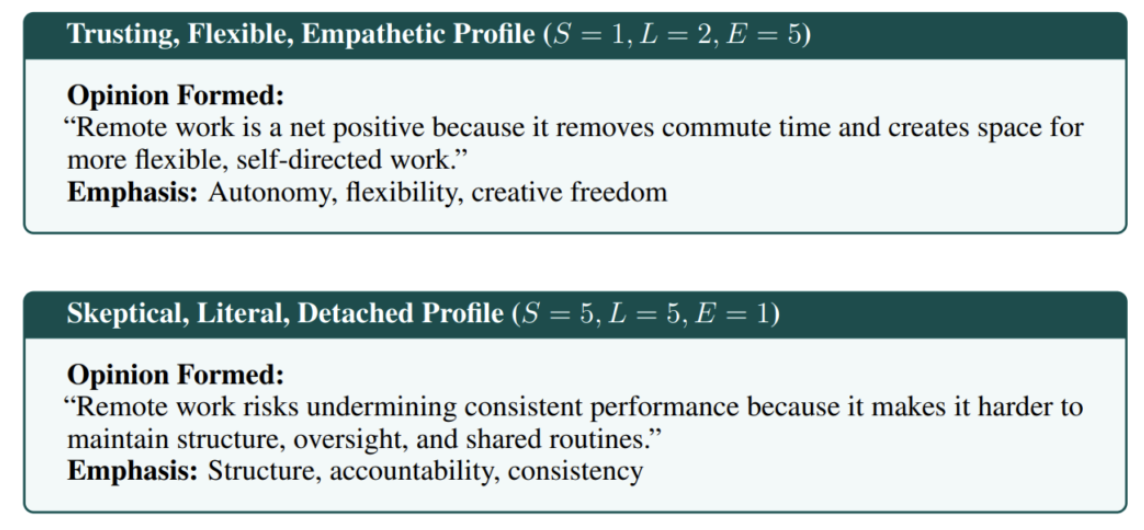

Disposition Parameters: Personality Affects Memory

One feature I find particularly interesting is disposition parameters. A Hindsight agent can be given three personality traits on a 1–5 scale:

- skepticism (1 = trusting, 5 = skeptical),

- literalism (1 = flexible, 5 = literal),

- empathy (1 = detached, 5 = empathetic),

plus a bias strength ∈ [0, 1] that controls how strongly these traits influence generation. In implementation, this is just part of the system prompt, but it has a noticeable effect.

The idea is that different projects need different "interpretation styles". For an analytical agent, you might set skepticism = 4, literalism = 4, empathy = 1; for a supportive assistant, skepticism = 2, empathy = 4. This changes how the agent weighs evidence during the reflect phase. From the same set of facts, agents with different dispositions will draw quite different conclusions — just as a skeptical journalist and an empathetic counselor would interpret the same conversation very differently.
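Since the dispositions end up in the system prompt, a configuration object for them can be tiny. The sketch below is illustrative only: the default values, the validation, and the prompt wording in `to_prompt` are hypothetical, not what Hindsight actually sends to the model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Disposition:
    skepticism: int = 3         # 1 = trusting, 5 = skeptical
    literalism: int = 3         # 1 = flexible, 5 = literal
    empathy: int = 3            # 1 = detached, 5 = empathetic
    bias_strength: float = 0.5  # how strongly the traits influence generation

    def __post_init__(self):
        for name in ("skepticism", "literalism", "empathy"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")
        if not 0.0 <= self.bias_strength <= 1.0:
            raise ValueError("bias_strength must be in [0, 1]")

    def to_prompt(self) -> str:
        """Render the traits as a system-prompt fragment (hypothetical wording)."""
        return (
            "Disposition on a 1-5 scale: "
            f"skepticism={self.skepticism}, "
            f"literalism={self.literalism}, "
            f"empathy={self.empathy}. "
            f"Bias strength: {self.bias_strength:.1f}."
        )


analytical = Disposition(skepticism=4, literalism=4, empathy=1)
supportive = Disposition(skepticism=2, empathy=4)
```

The two instances at the bottom mirror the analytical and supportive examples from the text, with the unspecified traits left at the sketch's neutral default of 3.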

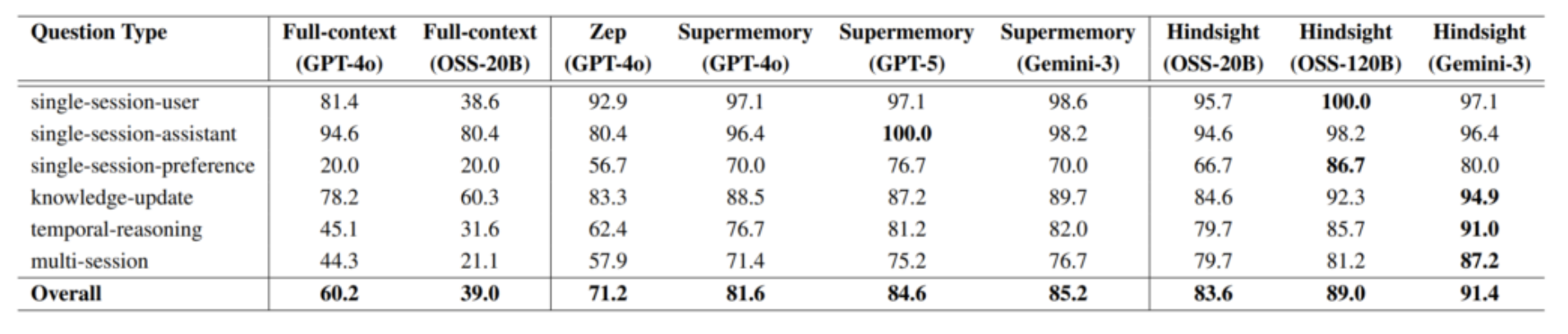

The Numbers

On LongMemEval (S setting, 500 questions), Hindsight with an open 20-billion-parameter model beats full-context GPT-4o by more than 23 points.

On LoCoMo, it wins again with a very large margin.

And on BEAM (the 10-million-token benchmark), it leads with 64.1% versus 40.6% for the next system (this is very recent news).

The headline here is not just that Hindsight takes first place but the size of the gaps: tens of percentage points practically everywhere. The "Memory in the Age of AI Agents" survey (Hu et al., 2025) evaluates Hindsight as the #1 system on the Agent Memory Benchmark (AMB) across LongMemEval, LoCoMo, BEAM, LifeBench, and PersonaMem.

So if you need something for production right now, Hindsight would be my recommendation.

Conclusions

Let me try to draw a few general lessons from all the work I have surveyed here.

First, retrieval strategy matters more than storage complexity. Hindsight wins benchmarks not because it has some fancy database, but because it has four retrieval strategies with RRF fusion. HippoRAG wins on multi-hop not because it has a sophisticated knowledge graph, but because it does one step of PPR instead of three LLM calls. Systems that rely solely on vector similarity consistently underperform.

Second, benchmarks vary wildly in what they measure. This is hardly news, but it is now documented quantitatively by MemoryArena: a system that scores 95% on LoCoMo in pure recall can score 40% when it needs to actually use the recalled facts in a real agent task. I am not sure we will close this gap soon, but at least MemoryArena and Mem2ActBench are asking the right questions.

Third, memory architectures are starting to learn. Memory-R1 is, in my opinion, the most important development of 2025–2026 in this space. It shows that a memory management policy can be learned from ~150 examples via downstream reward. The bio-inspired line (LightMem, SleepGate, EverMemOS) is moving in the same direction. I expect that within a couple of years, hand-written memory management strategies will gradually give way to learned ones.

Overall, this is one of the most interesting areas in applied LLMs right now. A year ago, it seemed like agents were bottlenecked by insufficient reasoning; six months ago, by tool use. Now I am increasingly convinced that the next critical bottleneck is memory. As for Milla Jovovich — well, she let us down. But at least she gave us a news hook.
