Late March 2026 was an interesting time for AI infrastructure. A Google Research blog post about a paper called "TurboQuant: Online Vector Quantization with Near-optimal Distortion Rate" (Zandieh et al., 2025) went viral; the paper had just appeared at ICLR 2026. Memory-chip makers such as Samsung, SK Hynix, and Micron lost 3–6% in a single trading day, and YouTube thumbnails started blaring things like "$450B Wiped Out – Google TurboQuant Just Crashed RAM Prices 30% Overnight".

It was all rather funny to watch, because the paper has been sitting on arXiv since April 2025, it is already a full year old. And the mathematics it stands on—Shannon's coding theorems, the Lloyd–Max algorithm, the Johnson–Lindenstrauss lemma—is older still, and eternal without any reservations. Today I’d like to unpack why this work is indeed beautiful and important, apart from the somewhat comical hype cycle that surrounded it recently.

This post will be on the long side, because I do not just want to summarize the paper; I want to put it in context. What is quantization, and why do we need it at all? Where does the theoretical machinery come from? And why does TurboQuant in particular manage to land so close to the theoretical limit?

Why We Need Quantization: The Problem in Context

Floating-Point Numbers Are Expensive

Let us start from the beginning. Neural networks store and process real numbers. The standard format today is float16 or bfloat16, i.e., sixteen bits per number. A model with 70 billion parameters is 70 billion such numbers, which already comes out to around 140 GB just for the weights. And during inference the model needs to hold intermediate computations as well.

The most important of those intermediate buffers is the KV-cache (key-value cache). To recall briefly how attention works in a Transformer: for each new token the model computes a query vector and compares it with all of the previous keys, in order to decide which parts of the context to pay attention to. The values are then weighted by those attention scores.

So that we do not have to recompute keys and values at every step, we store them in the KV-cache. The cache grows linearly with context length and is proportional to the number of layers and attention heads. Think of it like a court reporter transcribing a trial: every time a new witness speaks, the reporter must be able to flip back through all previous testimony to check for contradictions. The transcript grows with every word spoken, and the reporter needs it all in front of them at once. That transcript is the KV-cache: it is what lets the model "remember" everything it has read so far, and it gets heavier with every token.

For a 70B model with a 32K context, the KV-cache can eat up around 80 GB, which is actually more than the weights themselves. This is the main bottleneck for using LLMs, especially in any scenario that requires long context.

The Idea of Quantization

Quantization is the idea of replacing "expensive" real numbers with "cheap" small integers. Instead of storing 16-bit floats, let us store 4-bit or even 2-bit integers. Obviously we lose precision, but if we can keep the loss small, saving 4x to 8x on memory is a very good deal.

Formally, quantization is a pair of functions: an encoder Q: ℝd → {0,1}B and a decoder Q-1: {0,1}B → ℝd, where B = b·d is the total number of bits and b is the bit width (the average number of bits per coordinate). The goal is to minimize distortion:

This is just the mean squared error, that is, the reconstruction error.

In plain words, we are asking how much damage we do by rounding. If we round 3.7 to 4, the error is 0.3; MSE is just the average of all such squared rounding errors across the whole vector.

But for Transformer attention, MSE is not really what we care about. What actually matters is whether rounding messes up the comparisons between vectors — the inner products. Two vectors might be reconstructed with small MSE individually, yet the angle between them (which is what the inner product measures) could shift enough to redirect the model's attention to the wrong part of the context. So we introduce a second criterion that turns out to be even more important for attention:

i.e., error in the inner products. Attention weights in a Transformer are precisely inner products between queries and keys, so it is the inner product, not the raw vector, whose fidelity we actually care about.

Flavors of Quantization

Quantization comes in two broad flavors: scalar and vector. Scalar quantization means we quantize each coordinate of a vector independently; vector quantization means we quantize a whole vector at once, exploiting correlations between coordinates.

There is a second, orthogonal distinction: data-dependent (offline) versus data-oblivious (online) quantization. Offline quantization first looks at the data, builds codebooks from it, and only then uses those codebooks. The classic example is Product Quantization (PQ): chop each vector into subvectors, run k-means on each subvector position, then store only the indices of the nearest centroids.

The drawbacks of offline methods are obvious: you need a preprocessing pass, that pass is expensive, and if the data distribution changes (and the KV-cache changes at every generation step), then, strictly speaking, you would have to retrain everything.

Online quantization has to work without any training: you feed in a vector, you get back its quantized representation. For the KV-cache this is essential, because the cache gets a fresh vector at every generation step.

The Normalization Overhead Problem

One more subtlety makes KV-cache quantization nontrivial. Standard scalar quantizers work in blocks: take a block of g coordinates, compute its minimum and maximum (or mean and scale), split the resulting interval uniformly into 2b levels. But those normalization parameters, usually called "zero point" and "scale", have to be stored in full precision, one pair per block. With a block of 128 numbers and 2-bit quantization, that adds roughly 0.5-1 bit per number, which, it turns out, is a substantial overhead.

This is the problem that PolarQuant (which we will look at shortly) is trying to solve. TurboQuant eliminates the normalization overhead in a completely different way, through random rotations.

The Shannon Lower Bound: Why You Can Barely Do Better

Before we talk about any algorithm, let us establish how well we can possibly quantize at all. This is a beautiful piece of classical mathematics, and it is always a pleasure to revisit.

Shannon's Rate–Distortion Theorem

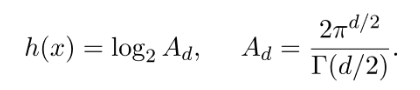

In 1959, Claude Shannon proved the rate–distortion theorem for lossy source coding. (Wikipedia has an article on his lossless source coding theorem but not on this one, though it is every bit as famous). The theorem says that for any random source x ∈ ℝd with differential entropy h(x), if we want to encode x using B bits, then the minimum achievable MSE is bounded below:

This is the Shannon Lower Bound (SLB), and it represents a fundamental limit that no algorithm can break, however clever, slow, and with however much preprocessing it works. Basically, Shannon's theorem says that if you have a budget of B bits total, this here is the best-case reconstruction quality you can ever hope for, no matter what compression scheme you dream up. It is a hard physical wall, like the speed of light in physics.

The proof goes through a so-called "backward Gaussian test channel": if we add to x an optimal Gaussian noise whose mutual information with x is at most B bits, then the MSE of any reconstruction is at least the quantity above.

Formally, if we let

where the infimum is over all joint distributions of (x, y) with bounded mutual information, then SLB says that

Specializing to the Unit Sphere

Now let us apply SLB to the case that actually matters for TurboQuant: suppose x is uniformly distributed on the unit sphere Sd-1. This is the key case because, as we will see, TurboQuant starts with a random rotation that makes any input vector uniform on the sphere.

The entropy of the uniform distribution on the unit sphere is the logarithm of the sphere's surface area:

Plug this into the SLB:

Now we need an estimate for Ad2/d. This is a slightly tedious derivation via Stirling's formula for the Gamma function, so let me skip it ,but at the and, for B = bd bits (that is, b bits per coordinate), we arrive at a clean and quite beatiful result:

Every extra bit per coordinate cuts the minimum achievable distortion by a factor of exactly four, and again, no algorithm on Earth (or out of it) can do better.

Let me emphasize this for a moment, because it is the sort of statement we rarely get to make in machine learning. Usually in ML, a new method "beats the previous state of the art by 2% on benchmark X". Here, we have a hard, non-negotiable limit coming from information theory that applies to every possible algorithm, including ones that have not been invented yet and never will be. It is a ceiling nobody can break. And, as we will see, TurboQuant pushes itself up against that ceiling within a factor of less than 3. That is a striking and unusual statement.

From MSE to Inner Products

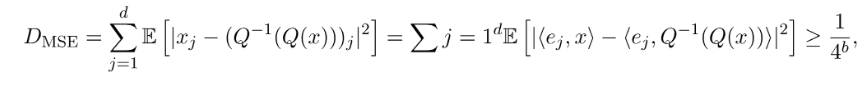

A simple trick extends this lower bound from MSE to inner products. Decompose MSE coordinatewise:

where ej are the standard basis vectors. Next we apply the pigeonhole principle, that is, the simple observation that if a total error of at least X is spread across d coordinates, at least one coordinate must carry at least X/d of that error. It is one of those ideas that sounds trivial yet turns out to be surprisingly powerful in mathematics. In this case, we conclude that there exists at least one coordinate j for which

This is the lower bound on the inner product error for y = ej:

Yao's Minimax Principle

One subtle point: the Shannon Lower Bound is proved for a fixed random distribution of inputs (uniform on the sphere). But what we actually want is a bound for the worst-case input against a randomized quantizer.

This is where Yao's minimax principle helps: the expected error of the optimal randomized algorithm on the worst-case input equals the expected error of the optimal deterministic algorithm on the worst-case random distribution of inputs. Since the uniform distribution on the sphere is one possible distribution, the SLB for it is also a lower bound for the worst-case scenario.

Here is the intuition: we proved our lower bound by assuming the input is random (uniform on a sphere). But a skeptic could say, "Sure, but maybe my particular vectors are easier to quantize than random ones." Yao's minimax principle shuts this down: it says that if no deterministic algorithm can beat the bound on random inputs, then no randomized algorithm can beat the bound on worst-case inputs either. The two problems are, in a precise sense, equally hard.

The end result is this. For any randomized quantizer Q with bit width b, there exists a vector x ∈ Sd-1 such that DMSE(Q) ≥ 1/4b.

PolarQuant: Polar Coordinates to the Rescue

Before we get to TurboQuant itself, let us look at PolarQuant (Han et al., 2025), another paper by the same group, which tackles the normalization-overhead problem in a completely different way. (A small warning: there is an unrelated concurrent paper by Wu et al., NeurIPS 2025, that is also called "PolarQuant" and also uses polar coordinates, but in a different setting; don’t mix them up.)

The Problem: Outliers and Normalization

Standard scalar quantization (KIVI is a typical example) works blockwise: take a block of g coordinates, compute its scale and zero-point, quantize. The trouble is that in the KV-cache of real LLMs, coordinates have outliers, specific channels with anomalously large values. A single outlier forces you to expand the quantization range for the whole block, which destroys precision for the rest of the coordinates. And the normalization parameters (scale, zero-point) have to be stored in full precision, which at 2–4 bits per coordinate can add 1–2 bits of overhead.

The Idea of PolarQuant

PolarQuant solves the problem in two steps.

Step 1: Random preconditioning. Multiply the vector x ∈ ℝd by a random matrix S ∈ ℝm×d with independent Gaussian entries. The result is subject to the Johnson–Lindenstrauss lemma. It is a very useful tool in high-dimensional geometry, which says that if you take a cloud of points in a very high-dimensional space and project it into a much lower-dimensional space using a random projection, all pairwise distances are approximately preserved. It sounds like magic—how can you throw away most of your dimensions and keep the geometry?—but it works because high-dimensional spaces are, in a sense, roomy enough that random directions are almost orthogonal to each other. So by the Johnson–Lindenstrauss lemma, this preserves norms and inner products up to a small distortion. But the key property is that after this multiplication, the vector Sx has a spherically symmetric Gaussian distribution N(0, ‖x‖2I). Outliers disappear, because all coordinates are now identically distributed.

Step 2: Switching to polar coordinates. Now the actual "polar" part. Instead of quantizing the Cartesian coordinates (x₁, x₂, …, xd), PolarQuant splits the vector into pairs (x₁, x₂), (x₃, x₄), …, and converts each pair to polar coordinates (r, θ):

If you have not thought about polar coordinates since high school: instead of describing a point by "how far right" and "how far up" it is (Cartesian coordinates), we describe it by "how far from the origin" (the radius r) and "which direction" (the angle θ). It is like giving directions as "500 meters northeast" instead of "350 meters east and 350 meters north." The two descriptions carry exactly the same information, but the polar form has a nice property for us: after the random projection, the angle and the radius behave in statistically well-understood ways.

Then the radii are themselves paired up and converted to polar coordinates, and so on, recursively, until you are left with a single final radius (essentially the vector's norm) and a set of angles.

Why This Works

After multiplying by a random matrix, the angles θ in the polar decomposition have a known, highly concentrated distribution. For a pair of independent Gaussian coordinates (x₁, x₂), the angle θ = arctan(x₂/x₁) is uniform on [−π, π].

At deeper levels of the recursion, when we take polar coordinates of pairs of radii (which have chi distributions), the distribution of angles becomes a scaled Beta distribution, sharply concentrated around π/4.

The authors derive the distribution of θ at every level of recursion analytically. For r₁ ~ χd₁, r₂ ~ χd₂, the angle θ = arctan(r₂/r₁) has density

This is a scaled Beta distribution on [0, π/2], and in high dimensions it is extremely concentrated, with a typical deviation from the mean of order O(1/√d).

What does that mean for us? The range of angle values is known in advance and does not depend on the data. There is no need to compute min/max for each block, no need to store scale and zero-point. The optimal quantizer for each angle can be precomputed once and for all, and the normalization overhead drops to essentially zero.

In full precision we only need to store the single final radius (the norm of the vector), that is, one number for the entire vector in dimension d = 128 or so, which is negligible overhead.

PolarQuant in Practice

On the Needle-in-a-Haystack benchmark (where the task is to retrieve a planted sentence from a very long document), PolarQuant gets 0.995 versus 0.997 for the full-precision model. That is meaningfully better than KIVI (0.981) and substantially better than pruning-based methods like SnapKV (0.858). The authors also report that generation becomes 14% faster than KIVI, and with better quality.

TurboQuant: The Key Ideas

Now to our main hero. TurboQuant is really an umbrella method that combines ideas from PolarQuant and QJL into a single pipeline with formal optimality guarantees. Strictly speaking, the TurboQuant paper itself does not use polar decomposition—it uses coordinatewise quantization after a rotation, which is simpler and faster—but the philosophy is the same: a random transformation takes you to a known distribution, which lets you use a precomputed codebook and drive the overhead to zero.

Step 0: Normalization

First, the vector is normalized: its L2-norm is stored separately as a float, and the vector itself is projected onto the unit sphere. This is a standard and harmless trick; restoring the scale at decoding time is trivial.

Step 1: A Random Rotation

This is the key trick. Multiply the vector by a random orthogonal matrix Π ∈ ℝd × d obtained from the QR decomposition of a random Gaussian matrix. The resulting vector Πx is uniformly distributed on the unit sphere Sd-1, regardless of what the original vector x was.

What does "uniformly distributed on the unit sphere" mean intuitively? Imagine throwing a dart at a randomly spinning globe with your eyes closed. The dart is equally likely to land anywhere. That is what happens to our vector after the random rotation: all directional structure is washed away, and every direction becomes equally likely.

Why do we want this? Because after the rotation, each coordinate of the rotated vector has a known distribution. And that’s why we can use a single, universal codebook, and we don’t need to know anything about the original vector's structure. Here is the precise statement.

Lemma (distribution of sphere coordinates). If x ∈ Sd-1 is uniform on the unit sphere, then for every coordinate j ∈ [d]:

This is a rescaled Beta distribution. For large d (and in practice d is typically 64, 128, 256, …) it is approximated very well by N(0, 1/d).

There is one more important fact, deeper than it looks: in high dimensions, the coordinates of the rotated vector become almost independent. This is a nontrivial result in probability theory, so we will not discuss it in detail, but it is precisely this near-independence that lets us quantize coordinates separately and lose almost nothing.

Step 2: Optimal Scalar Quantization (Lloyd–Max)

Since the coordinates are (nearly) independent and their distribution is known, we can quantize each coordinate separately using the optimal scalar quantizer. This reduces to a one-dimensional, continuous k-means problem: partition the interval [−1, 1] into 2b clusters, minimizing the expected squared deviation from the nearest centroid:

The optimal solution satisfies two conditions:

- cluster boundaries are midpoints between adjacent centroids (1D Voronoi partition);

- centroids are conditional expectations of fX on each cluster.

If you are familiar with k-means clustering: imagine running k-means on a single number line, where your "data points" are drawn from a known bell-curve distribution. You want to find the best 2b representative values (centroids) so that each number gets rounded to its nearest representative with as little error as possible. This is precisely the problem solved by the classical Lloyd–Max algorithm from the 1960s (Lloyd, 1957/1982; Max, 1960), which iteratively updates boundaries and centroids.

One can literally compute the exact numbers here. The codebooks depend only on the dimension d and the bit width b, not on the data. They can be computed once and stored. No calibration, no training, no data-dependent tuning of any kind.

The quantization procedure is then trivial: for each coordinate of the rotated vector, find the nearest centroid and store its index as a b-bit integer. Decoding reconstructs the centroids from the indices and rotates back via Πᵀ.

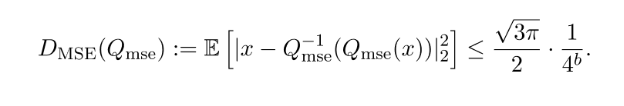

We can now give the precise statement of the main theorem, the MSE guarantee for TurboQuant.

Theorem. For any bit width b ≥ 1 and any vector x ∈ Sd-1, the MSE-optimal TurboQuant Qmse : ℝd → {0,1}bd satisfies

For small b the results are even better, and for b = 1, the gap to the Shannon lower bound is only about 1.44, which is practically optimal.

The Bias Problem: Why We Need a Second Stage

So the problem seems solved: Qmse gives near-optimal MSE. But for Transformers we do not actually care about MSEб we care about inner products ⟨y, x⟩. And it turns out that MSE-optimal quantization is biased when we use it to estimate inner products.

Why? Consider the simplest case, b = 1. The optimal quantizer at large d is essentially just the sign function, and its dequantized values are ±√(2/(πd)). This means that the estimator of the inner product picks up a multiplicative bias of 2/π ≈ 0.637, that is, every attention weight gets systematically underestimated by about 36%.

For example, suppose the true attention weight between a query and a key is 0.8. After 1-bit MSE quantization, our estimate would be about 0.8 × 0.637 ≈ 0.51, far from a small rounding error! And it affects every inner product in the same direction, so the model would systematically underweight all of its attention, making its outputs mushier and less focused.

The bias shrinks as b grows, and tends to zero with b going to infinity, but low bit widths are exactly the regime we care about, and there the bias is quite serious.

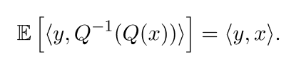

So for nearest-neighbor search, and for attention in Transformers, we want an unbiased estimator:

To get it, we need another important component of TurboQuant: the Quantized Johnson–Lindenstrauss method (Zandieh et al., 2024). The idea is simple: take a random Gaussian matrix S ∈ ℝd × d, project x, and take the sign:

There are theoretical guarantees here too, and in addition to unbiasedness there is also a theoretical bound on the variance. Again, the bounds themselves go out of scope for my post, but as a result, we have a method that uses one bit per coordinate, requires no training, and admits rigorous probabilistic guarantees.

TurboQuant Proper

Now the actual TurboQuant method combines both ingredients. To get bit width b, we do the following.

- Apply Qmse with bit width b−1, which gives a good reconstruction.

- Compute the residual: r = x − Qmse-1(Qmse(x)).

- Apply QJL to the residual: store sign(S · r) and ‖r‖.

In total we spend b bits per coordinate: b−1 bits on the MSE part plus 1 bit on QJL. At decoding time, we add both pieces: the reconstruction from the MSE quantizer plus the scaled QJL reconstruction of the residual. And TurboQuant also has nice theoretical guarantees.

Theorem 2 (inner-product quality). For any bit width b ≥ 1 and any x ∈ Sd-1, y ∈ ℝd, TurboQuant gives a map Qprod whose output x̃ satisfies:

- unbiasedness: E[⟨y, x̃⟩] = ⟨y, x⟩;

- distortion bound: Dprod := E[|⟨y, x⟩ − ⟨y, x̃⟩|²] ≤ (√(3π)/2) · (‖y‖²/d) · (1/4^b).

Again, we can compute specific bounds:

Okay, the theory is nice, but what happens in practice?

Experimental Results and Next Steps

KV-Cache

On the Needle-in-a-Haystack benchmark, TurboQuant with 4x compression gets exactly the same score as the full-precision model, 0.997. For comparison, here are other methods:

On LongBench (a benchmark suite for long contexts), Llama-3.1-8B-Instruct with 3.5-bit TurboQuant scores 50.06, identical to the full-precision model; at 2.5 bits it scores 49.44, which is nearly imperceptible degradation. And the compression ratio is over 4.5x.

The non-integer bit widths (2.5, 3.5) arise from an outlier-allocation strategy: 32 "outlier" channels are quantized with higher precision (3 bits) and the remaining 96 channels with lower precision (2 bits), giving (32·3 + 96·2)/128 = 2.5 on average.

The authors also show attention speedups on an H100: 4-bit TurboQuant is up to 8x faster than the 32-bit baseline.

Nearest Neighbor Search

TurboQuant beats both Product Quantization and RaBitQ in recall across all tested datasets (GloVe at d = 200, OpenAI embeddings at d = 1536 and d = 3072). And the indexing time is essentially zero (0.0013 seconds for 100K vectors at d = 1536), because the codebooks are precomputed, unlike PQ, which has to run k-means (239.75 seconds for the same workload).

What the Community Has Been Doing

Once the paper—published, as I have already mentioned, in April 2025—finally got its well-deserved attention, the community piled in and started reproducing results and pushing further. Within a week of the Google blog post there were implementations in PyTorch, Rust, MLX (for Apple Silicon), and Triton, and a thirty-plus-person discussion kicked off in llama.cpp.

A few interesting practical findings have emerged already.

1. MSE-only is often better than MSE+QJL for attention. Several independent teams have now confirmed this. The reason is that QJL removes bias but adds variance, and softmax amplifies variance: errors in inner products are exponentiated, and noise on a single attention weight can drag an entire head. In the regime of b = 2-4 with typical head dimensions (d = 64, d = 128), MSE-only gives better top-1 token matching. This does not actually contradict the theory — TurboQuant's theoretical analysis optimizes the MSE of the inner product before softmax, whereas the practical metric is top-1 accuracy after softmax, which is a different quantity.

2. Keys and values should be quantized differently. It turns out the norms of keys and values differ substantially (in Qwen-style models, by up to 100x). Errors in keys translate directly into errors in attention weights, while errors in values get averaged out. The best strategy, for a fixed bit budget, is to give keys more bits (say, 4) and values fewer (say, 2).

3. Update (late March 2026): the combination Walsh–Hadamard Transform + QJL + MSE, where MSE and QJL use independent random transforms, has emerged as the best strategy. The issue with early implementations was using the same random matrix for both stages, which induced correlations. With independent projections, QJL does help.

A Different Approach: KVTC from Nvidia

TurboQuant is not the only KV-cache compression paper at ICLR 2026. Nvidia presented KVTC (KV Cache Transform Coding; Staniszewski, Łańcucki, 2025), which achieves 20x compression with less than 1% quality loss. Their approach is completely different: PCA decorrelation (computed on a calibration dataset) plus adaptive quantization plus entropy coding (DEFLATE). Fundamentally, it is the classical transform coding method, adapted to KV-caches.

If you have ever saved a photograph as a JPEG, you have used transform coding: the image is split into 8×8 blocks, each block is transformed (via a DCT), and then the transform coefficients are quantized — with more bits for the important, low-frequency parts and fewer bits for the fine detail your eye cannot see anyway. KVTC does the same thing to KV-cache vectors, but with PCA instead of DCT, and with learned bit allocation instead of JPEG's fixed tables.

KVTC exploits the low-rank structure of the KV-cache. It turns out that KV tensors are highly correlated, and PCA lets us identify the principal components and allocate more bits to them, giving zero bits to the tail and effectively discarding it. This yields much higher compression, but at the cost of making KVTC an offline, data-dependent method: the PCA matrix has to be computed on calibration data (~200K tokens on an H100), and it is specific to each model.

These are two very different approaches, and they do not compete so much as complement each other. TurboQuant is an elegant theoretical work, data-oblivious, provably close to optimal, and it is a plug-in method with zero setup. KVTC is a more engineering-oriented method that uses the structure of the data and sacrifices universality in exchange for much stronger compression.

The reader might wonder at this point: if TurboQuant is already close to the Shannon limit, how can KVTC be substantially better? The answer is that the Shannon limit we derived holds for input vectors in the worst case. KVTC, by training on calibration data, learns the structure of real workd KV-caches, which are naturally very far from the worst case; they have low-rank structure and correlations between coordinates, and KVTC can exploit it.

Conclusion

There was simply no way to walk past the TurboQuant story without a post.

For one thing, this is the kind of result where eternal mathematics—information theory and some fairly deep probability theory—leads directly to a state-of-the-art result on a problem of genuine practical importance. There is no meta-model to be trained, no reinforcement learning, no clever architecture or engineering tricks: just a random rotation, a sixty-year-old scalar quantizer, and a one-bit correction on the residual.

Second, the result is provably near-optimal. In the world of new models that are "on average 5% better on benchmark X than the previous SotA", it is always a pleasure to see a method that mathematically does no more than 2.7x worse than any possible algorithm.

Third, it is a data-oblivious algorithm that works online. Its codebooks depend only on the dimension and the bit width, and the same quantizer works for any model. For deployment, this is a significant practical advantage: TurboQuant can slot into a standard model-agnostic stack.

Fourth, this story again vindicates an old saying that goes back at least to the 1880s: "In theory, there is no difference between theory and practice; in practice, there is". Practical implementations immediately revealed that the QJL stage, theoretically necessary for unbiasedness, can actually hurt in practice, or that keys and values need to be quantized asymmetrically.

Finally, the very fact that KV-cache compression is approaching the Shannon limit means that the race for compression in this particular direction is nearing its end. Within the data-oblivious paradigm, you cannot squeeze much more, so further progress will have to come from data-dependent methods (like KVTC), hybrid approaches, or possibly completely new paradigms we have not seen yet.

Meanwhile, mathematics really is eternal. In this post we referenced work by Shannon (1948, 1959), Lloyd (1957) and Max (1960), Johnson and Lindenstrauss (1986), and these results turned out to be the backbone of the hottest engineering news of March 2026. Math is forever, and the rest is commentary.

.svg)